This quickstart uses Agent Builder to scaffold the agent, then Web Calling to embed it. Available to project admins on enterprise clusters and to free-trial accounts. Free-trial users can switch to the full enterprise quickstart via the Free trial — exit pill.

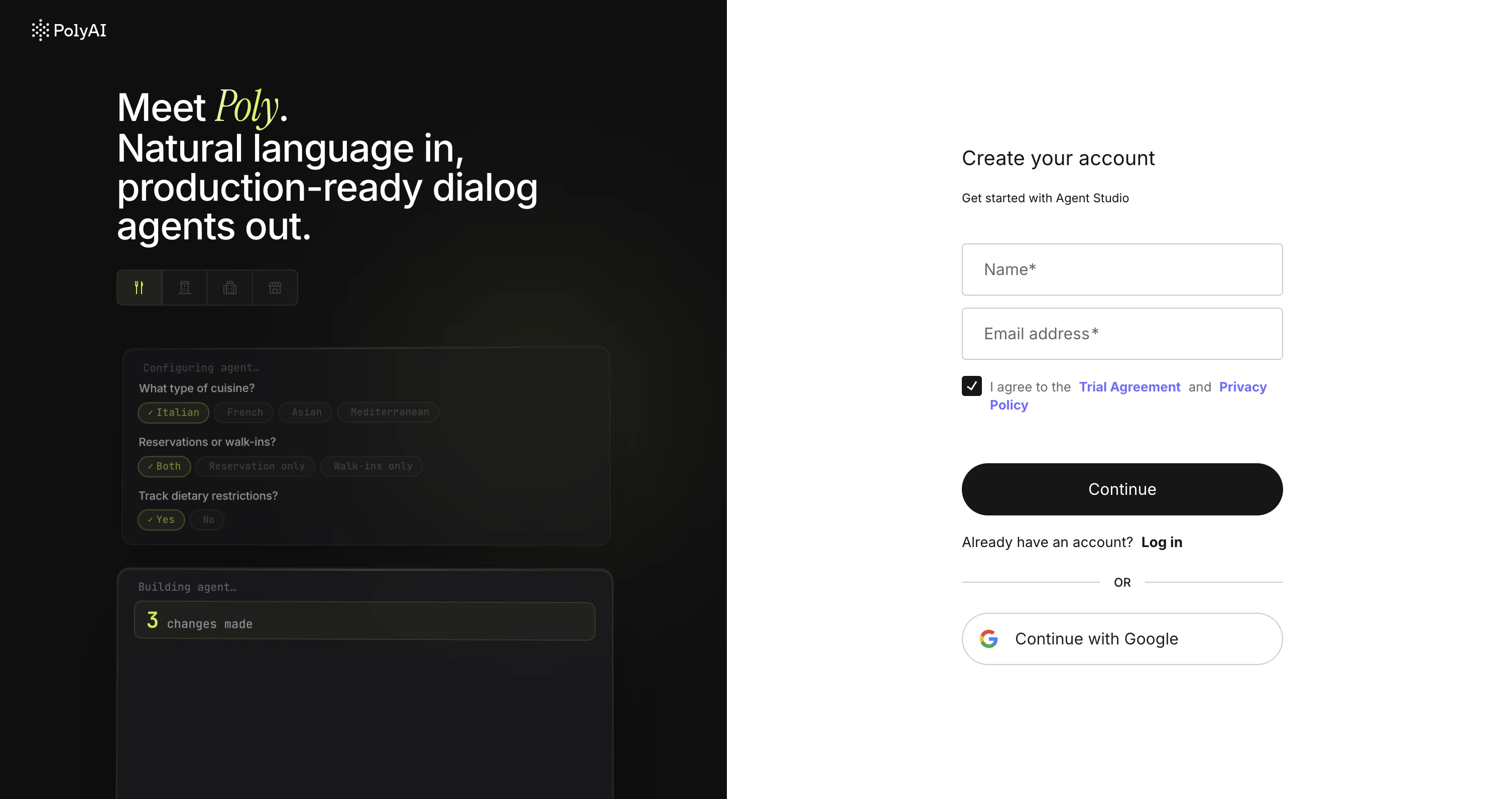

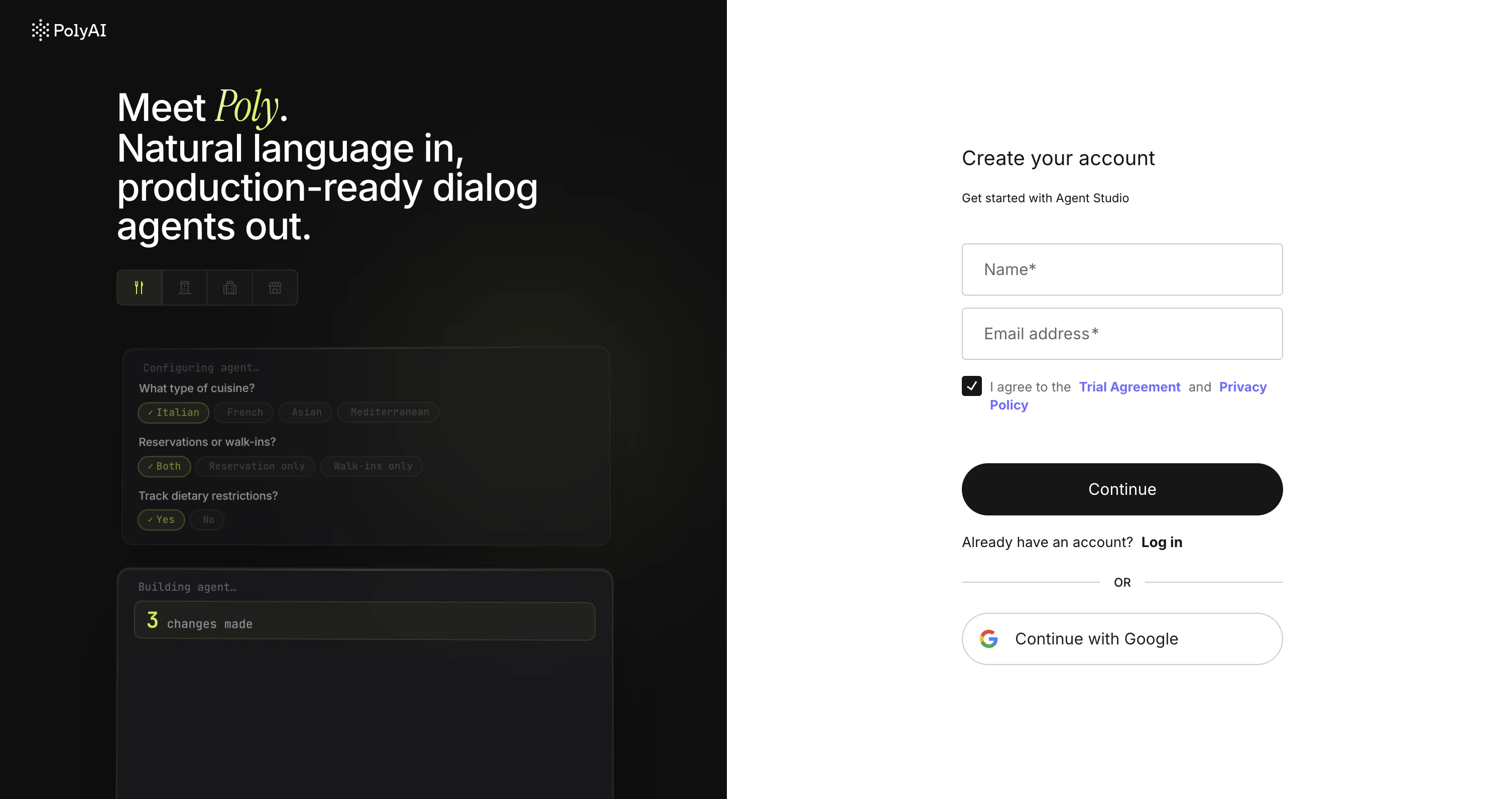

Create your account (~1 min)

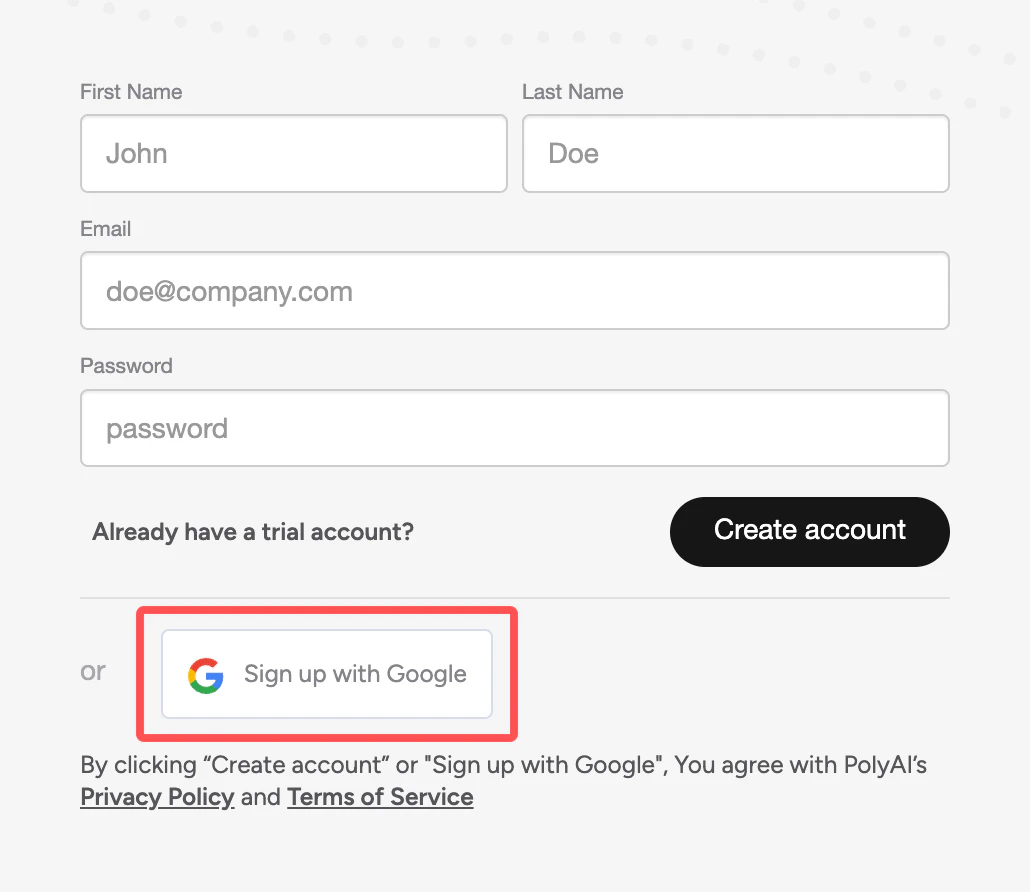

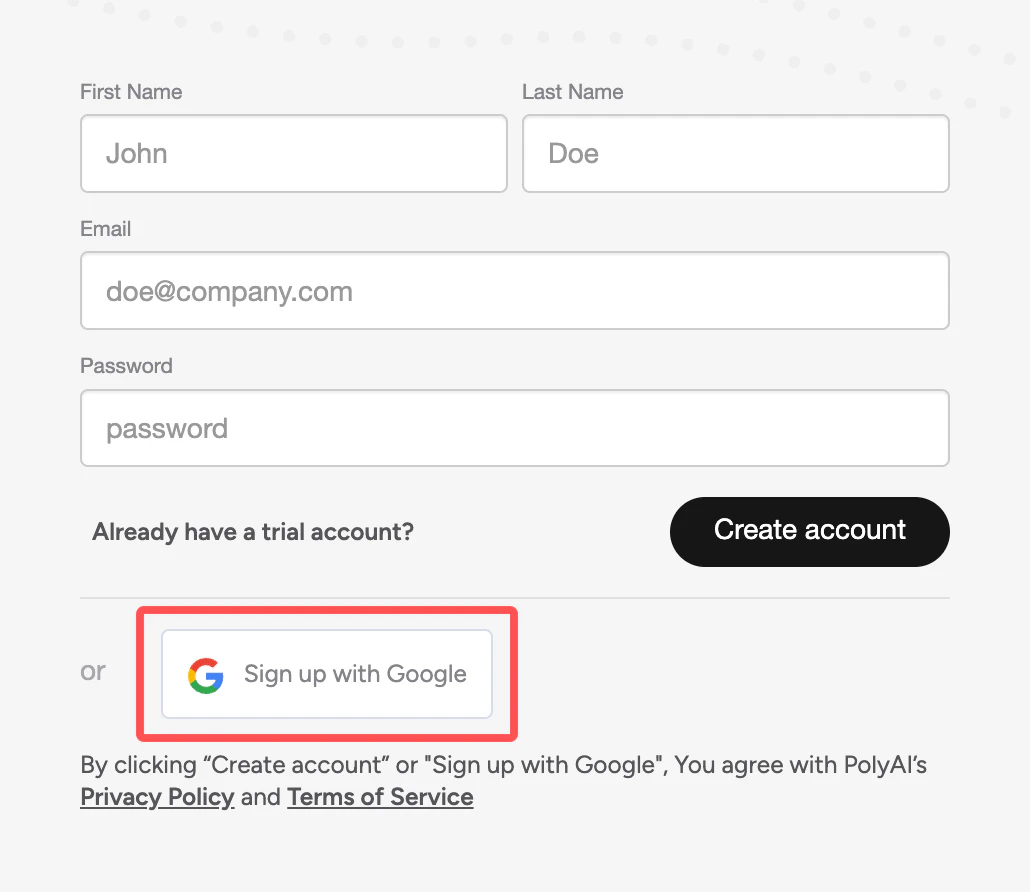

Go to studio.poly.ai and sign up with your email or Google account. No sales call to start.

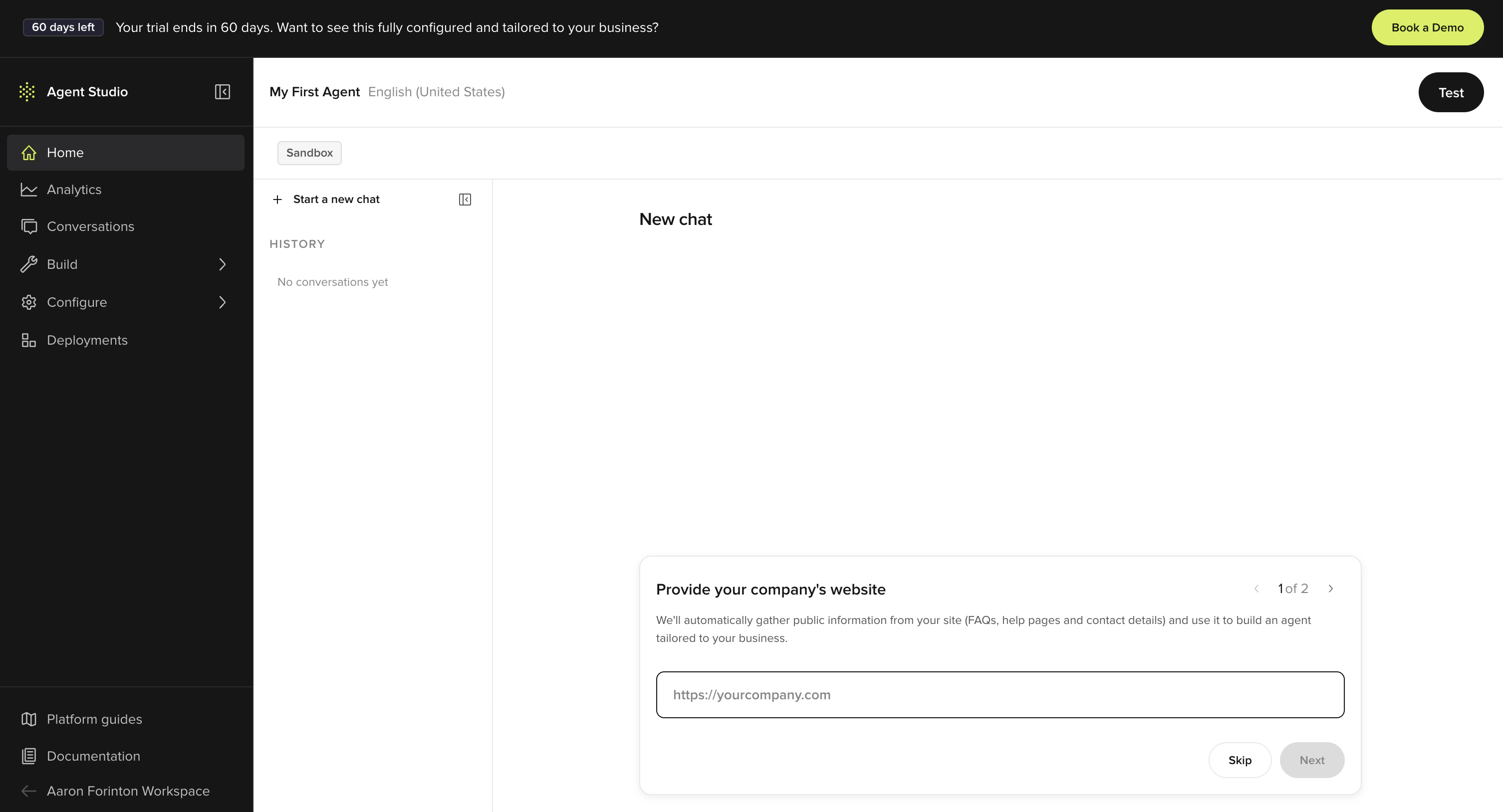

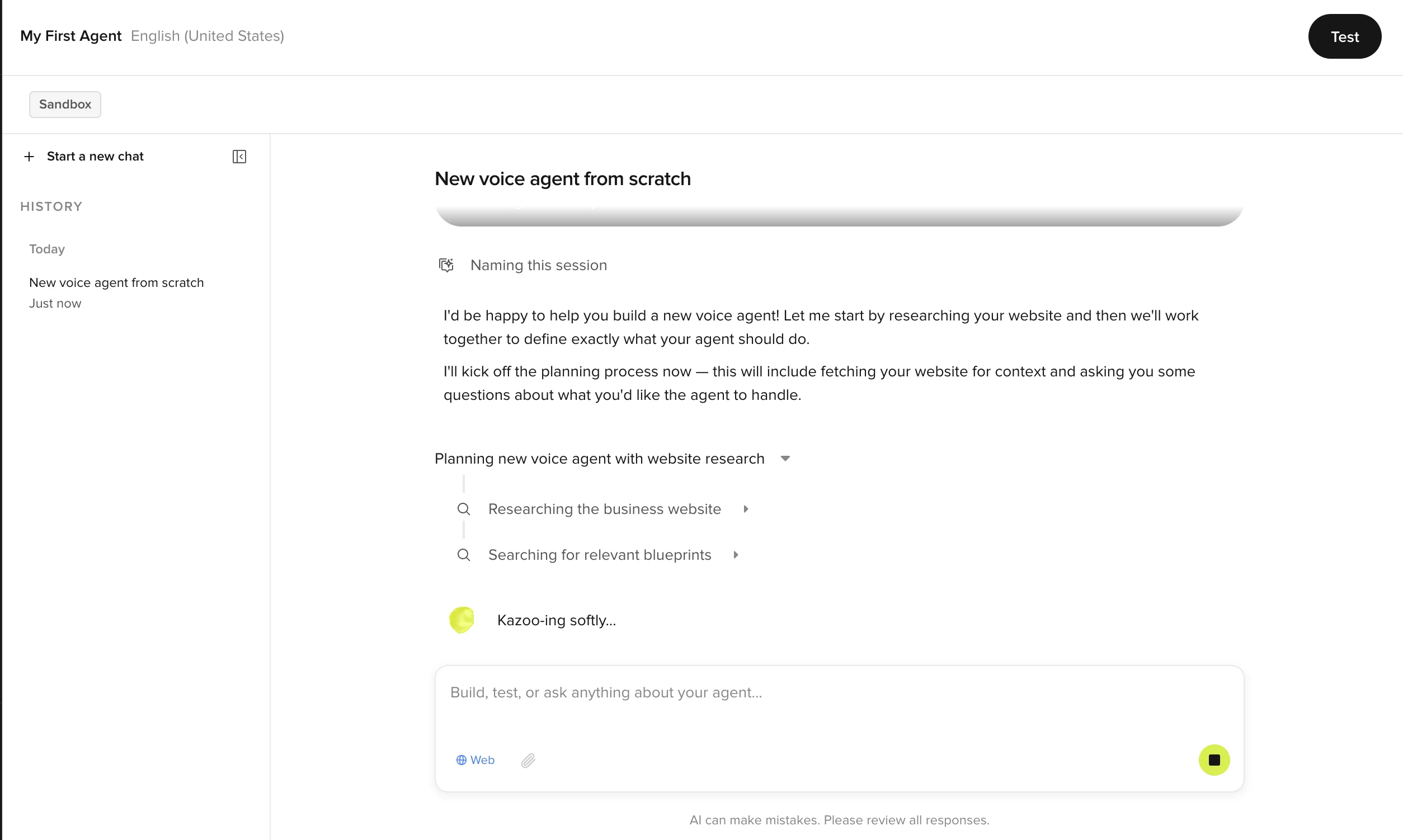

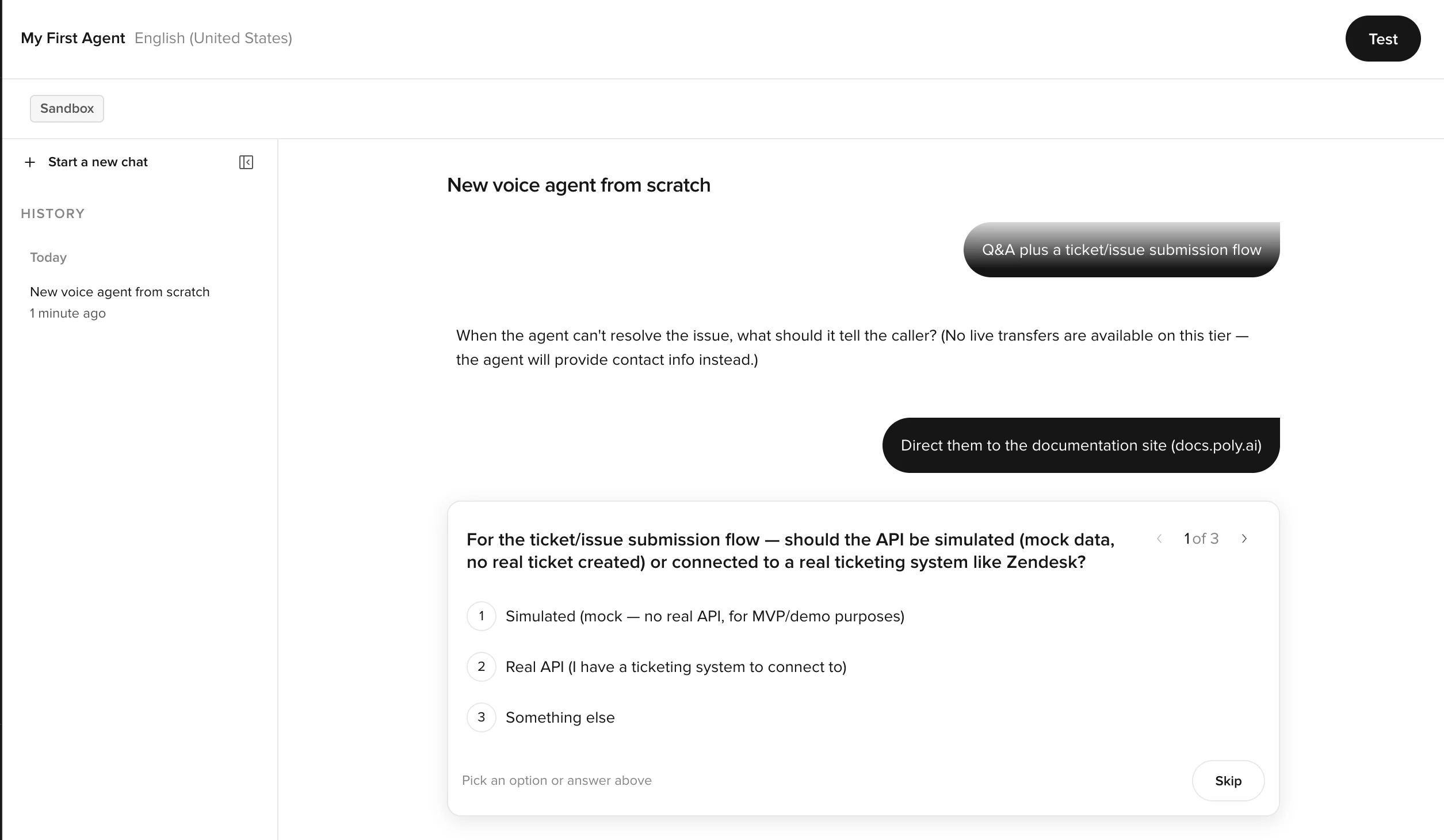

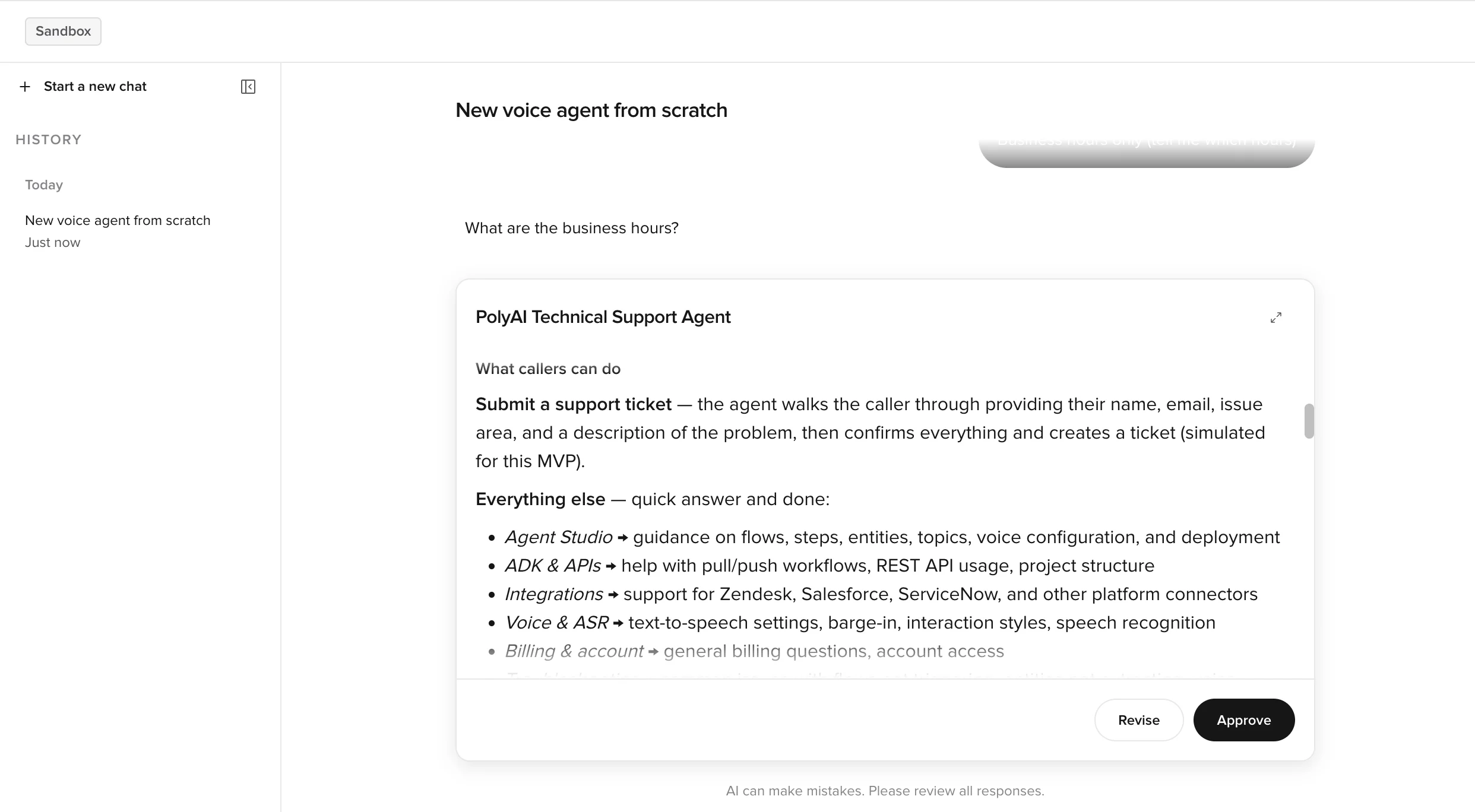

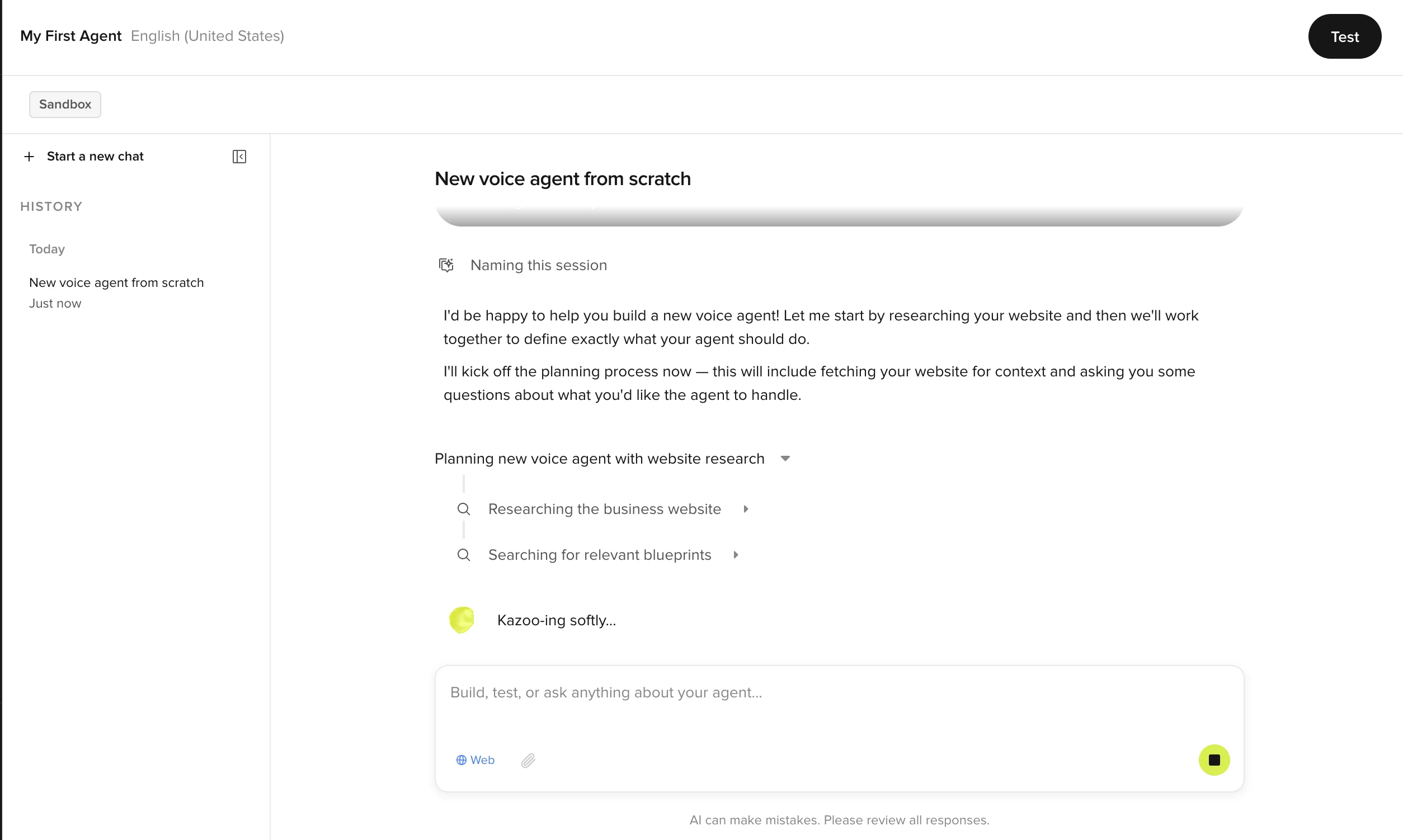

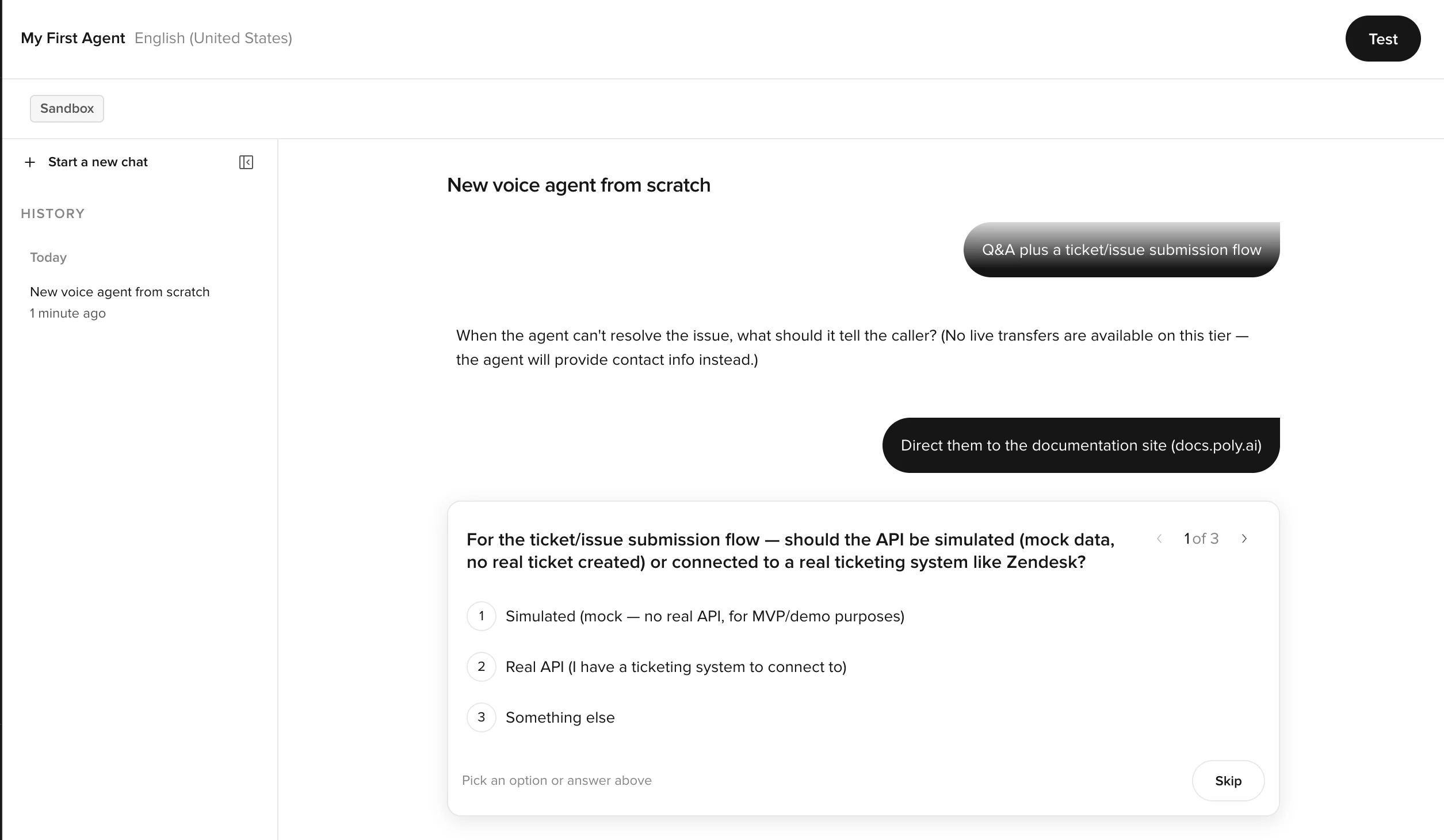

Describe your agent (~3 min)

Tell Agent Builder what you want to build. A URL, a document, or a sentence describing the business is enough to get started.

Example prompts:

- Build a reservations agent for a restaurant. Take name, party size, date, and time, then confirm.

- Read https://example.com and build an agent that answers questions about the company.

- I run a clinic. Build an agent that handles appointment requests and FAQ about opening hours.

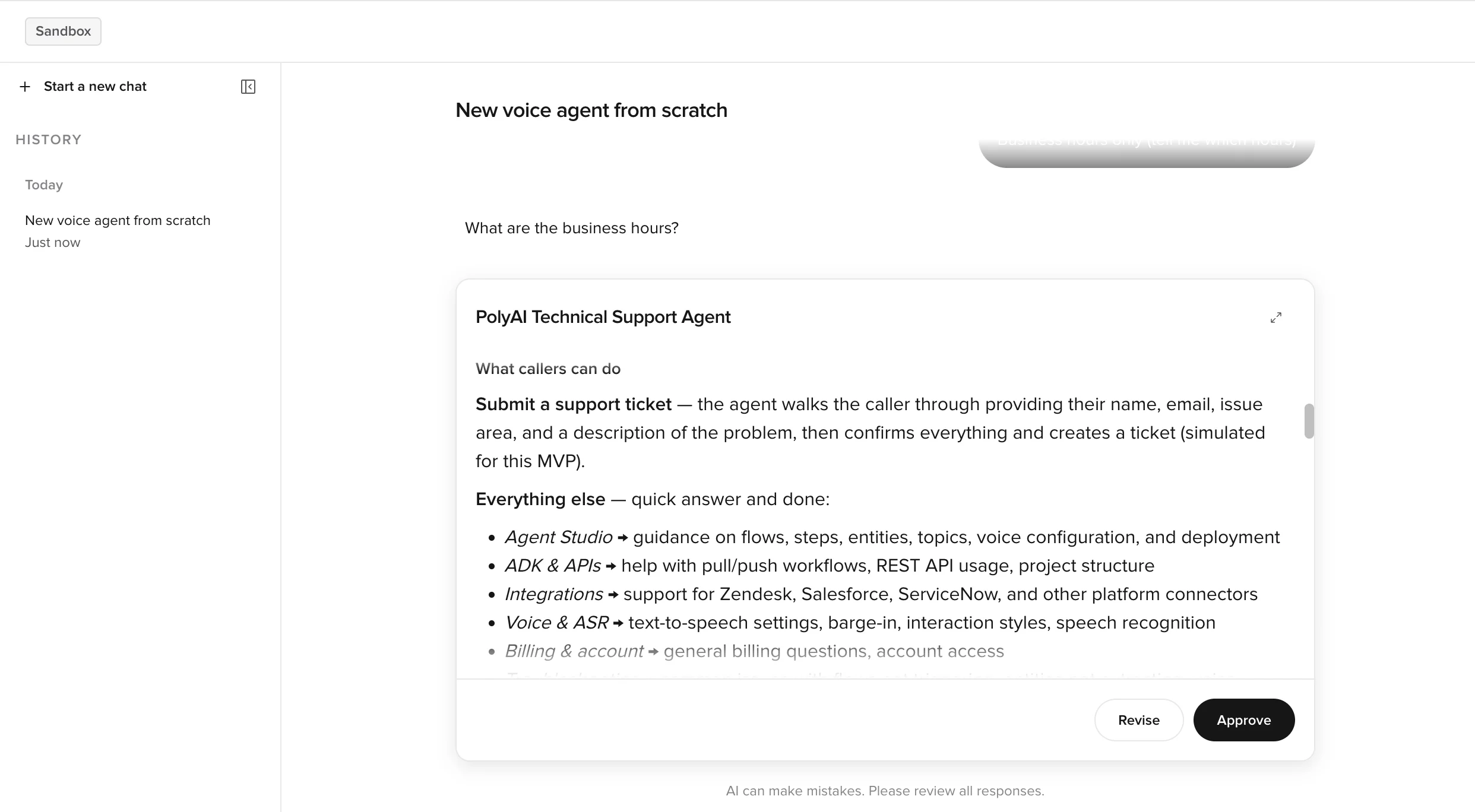

Refine knowledge and behavior (~3 min)

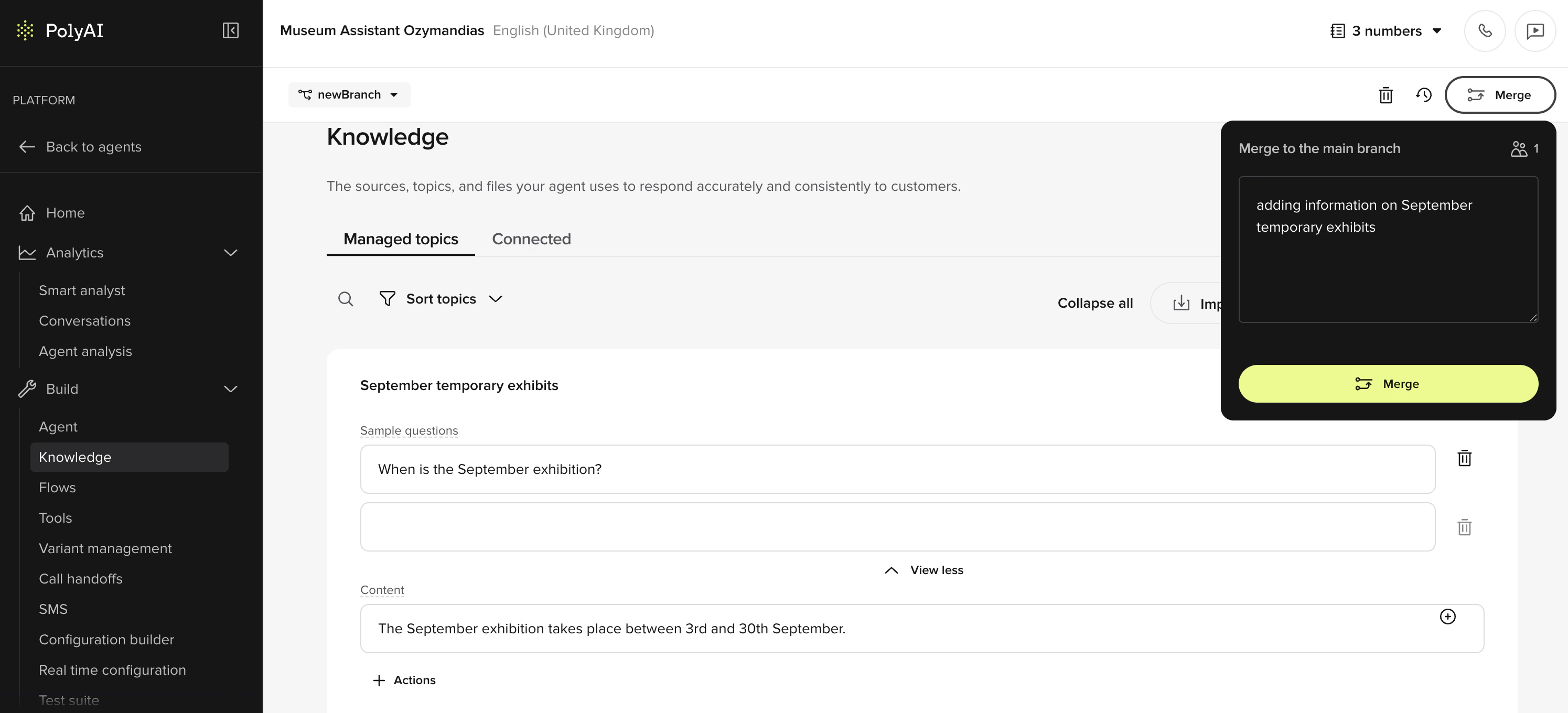

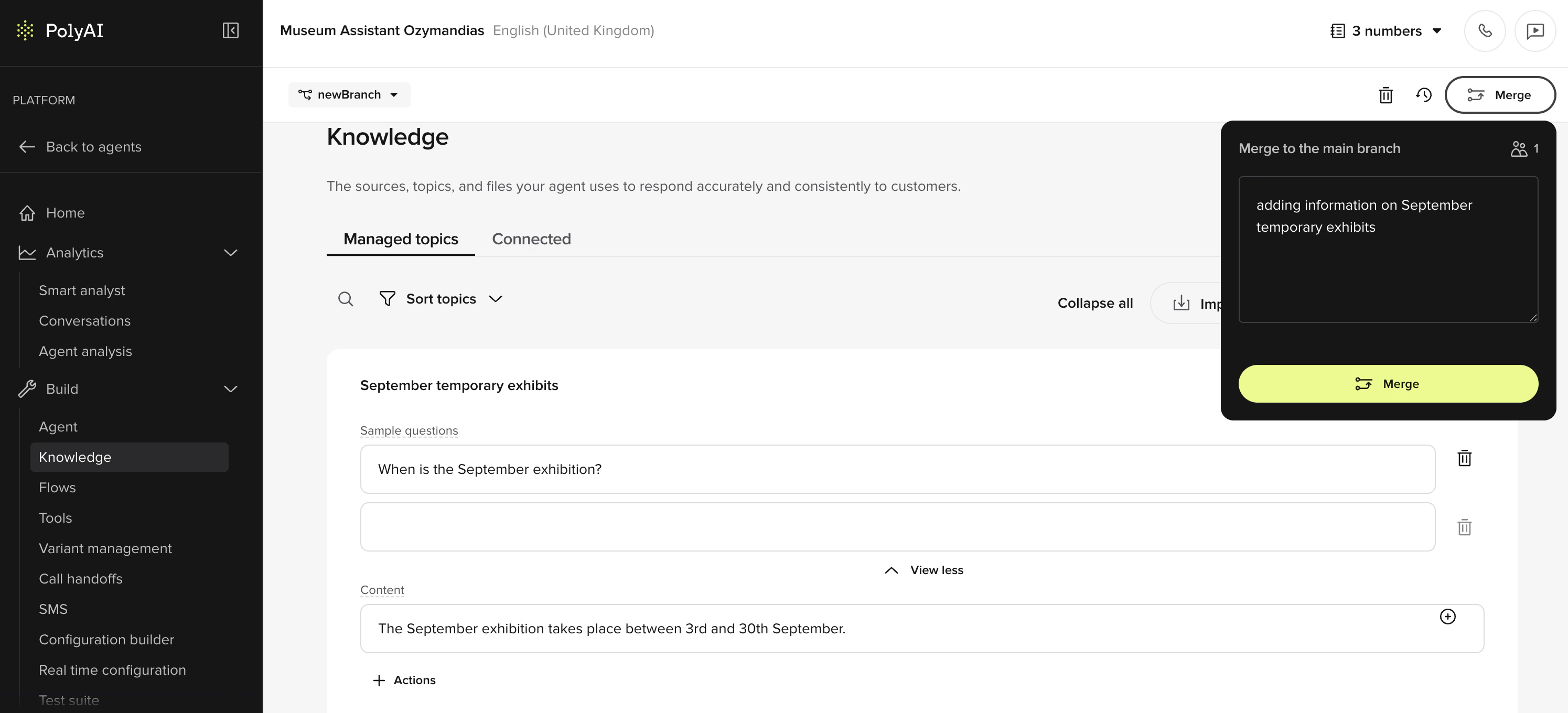

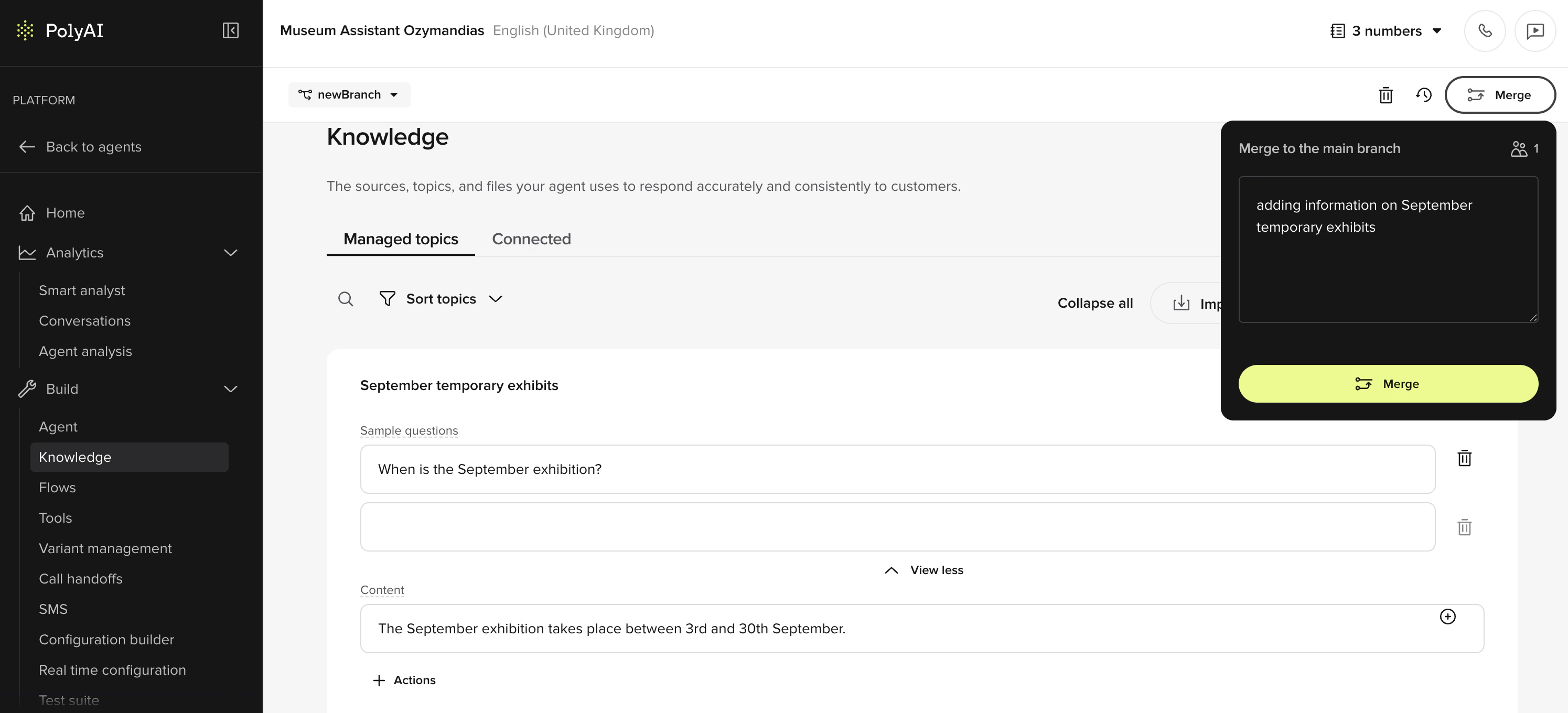

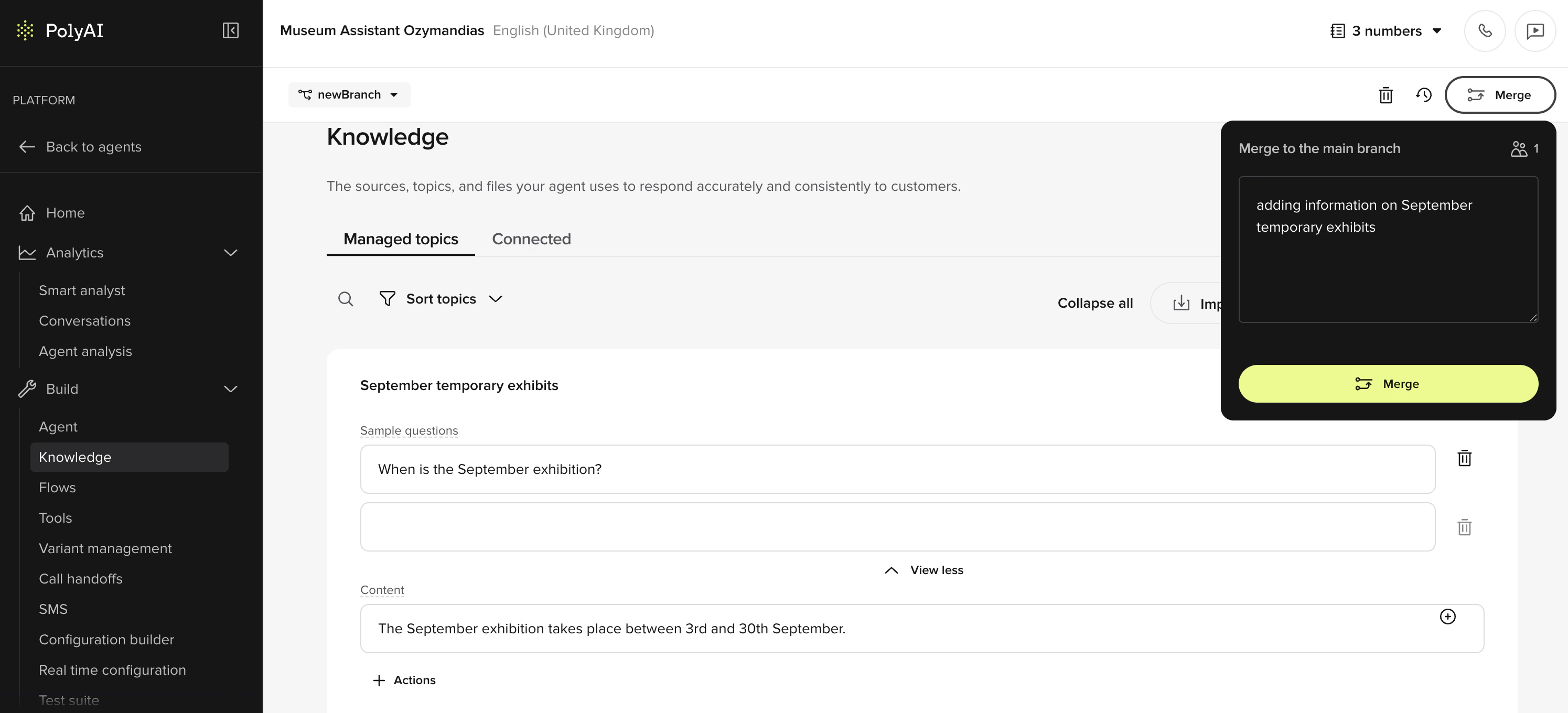

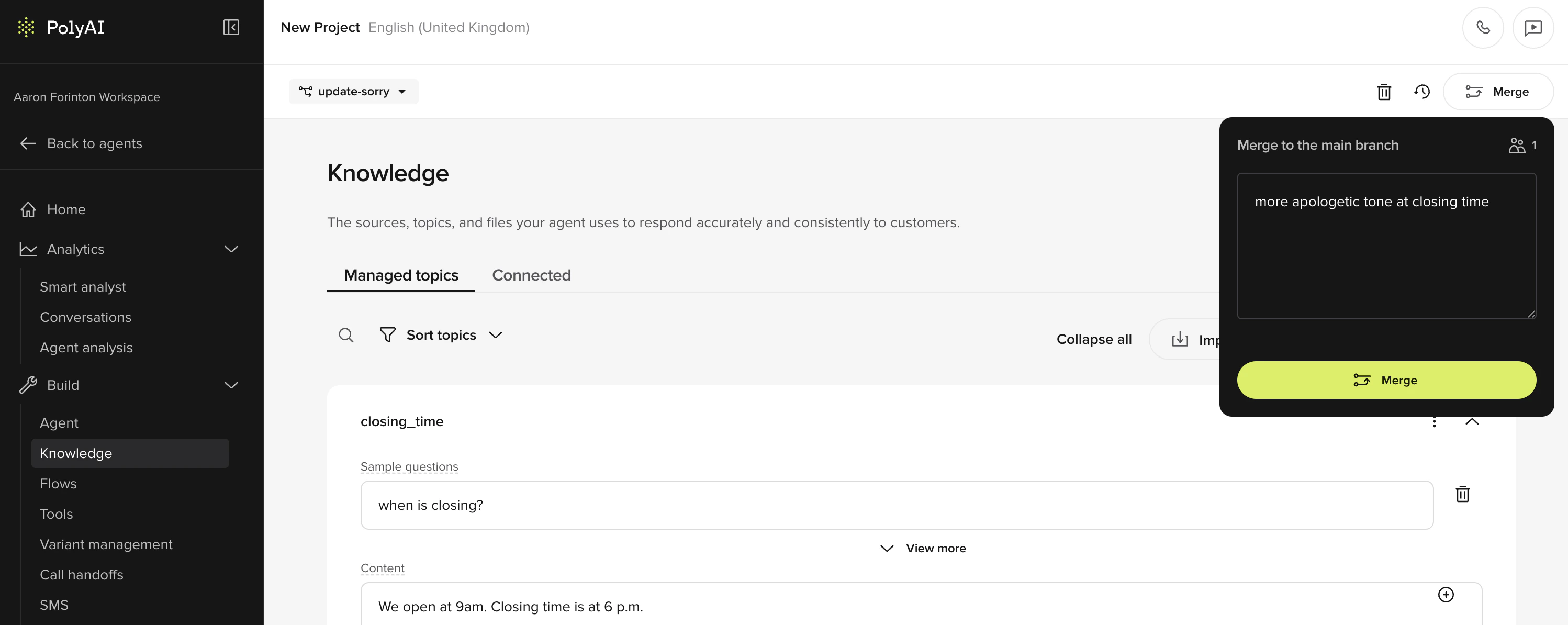

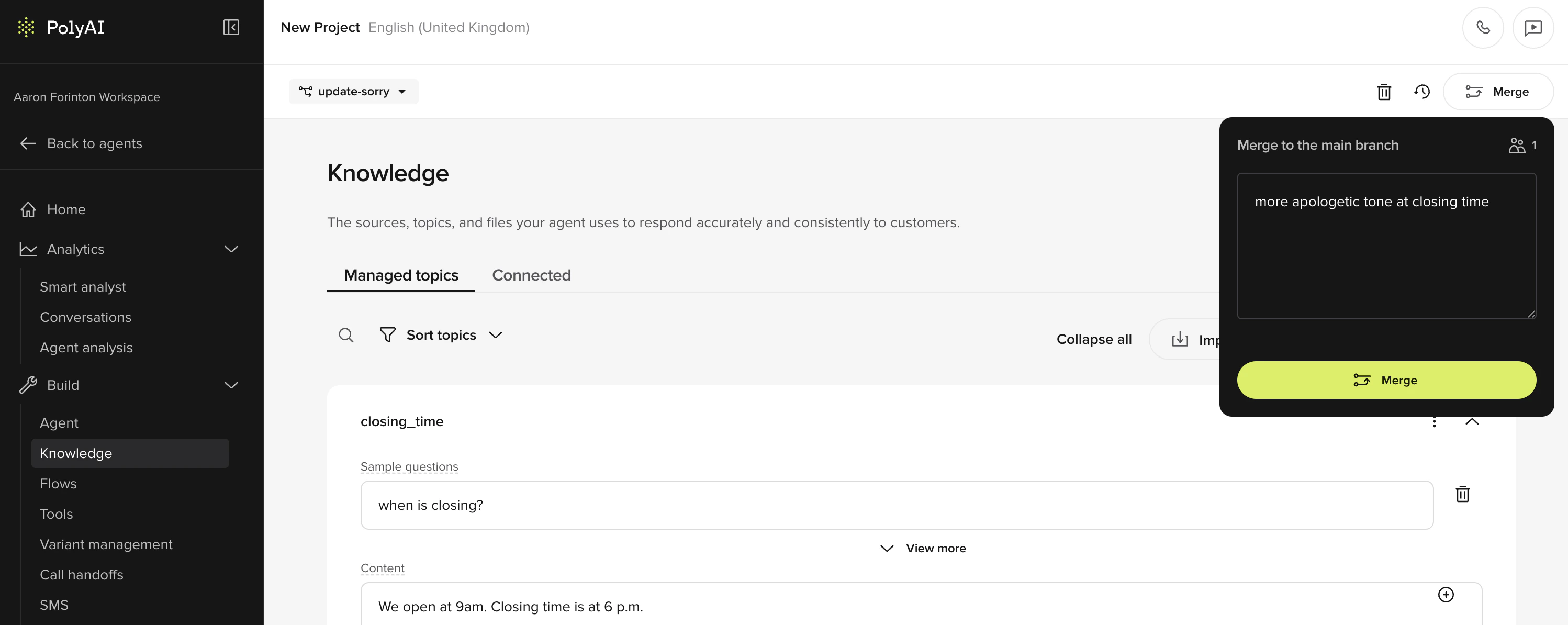

Agent Builder will have populated Build > Knowledge > Managed Topics based on what you gave it. Open it and skim:

- Topics: each is a question/answer pair grouped by intent. Edit any topic to tighten the answer or add sample utterances.

- Sources: upload extra PDFs or paste URLs to expand the knowledge base. Agent Builder turns them into topics automatically.

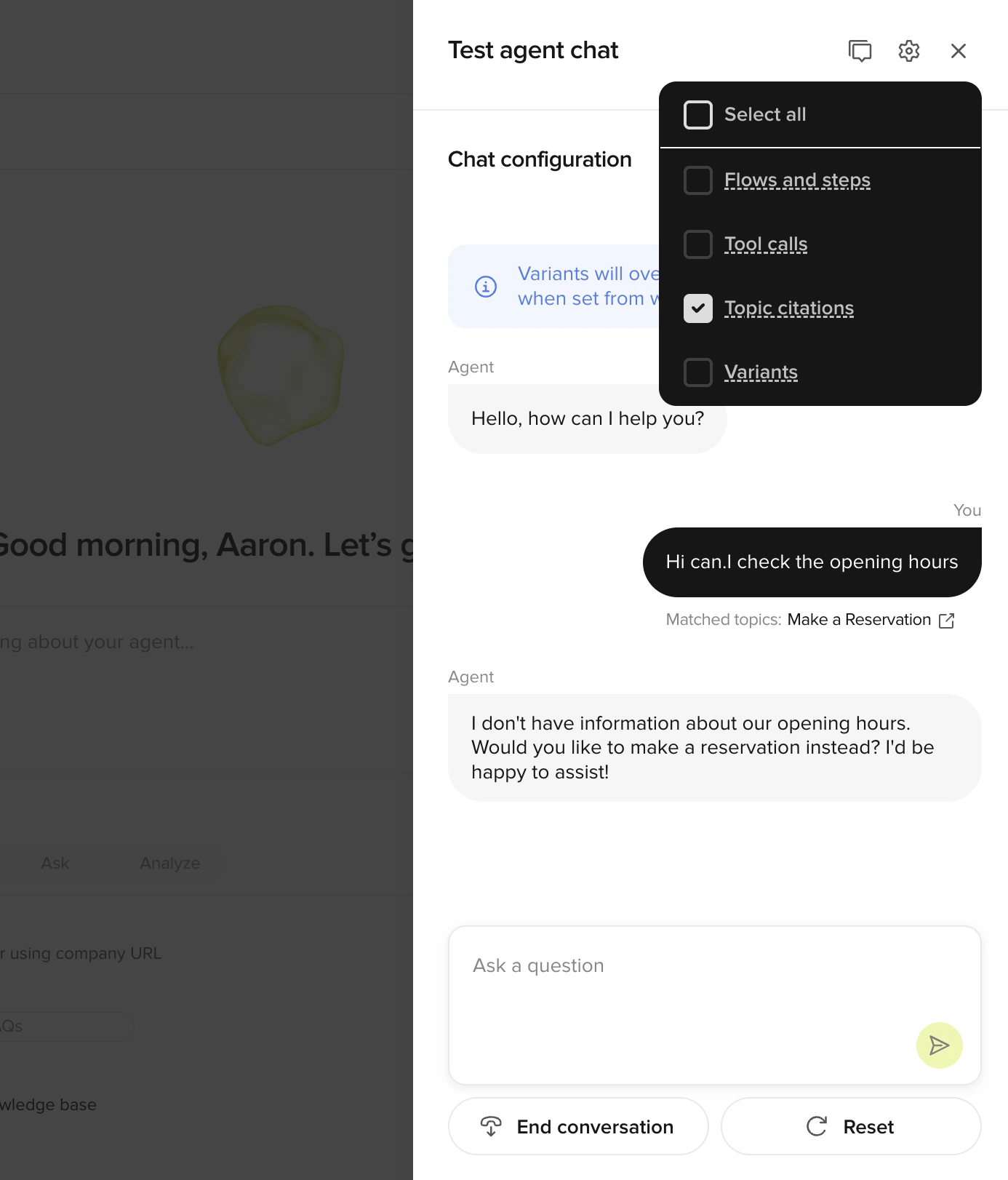

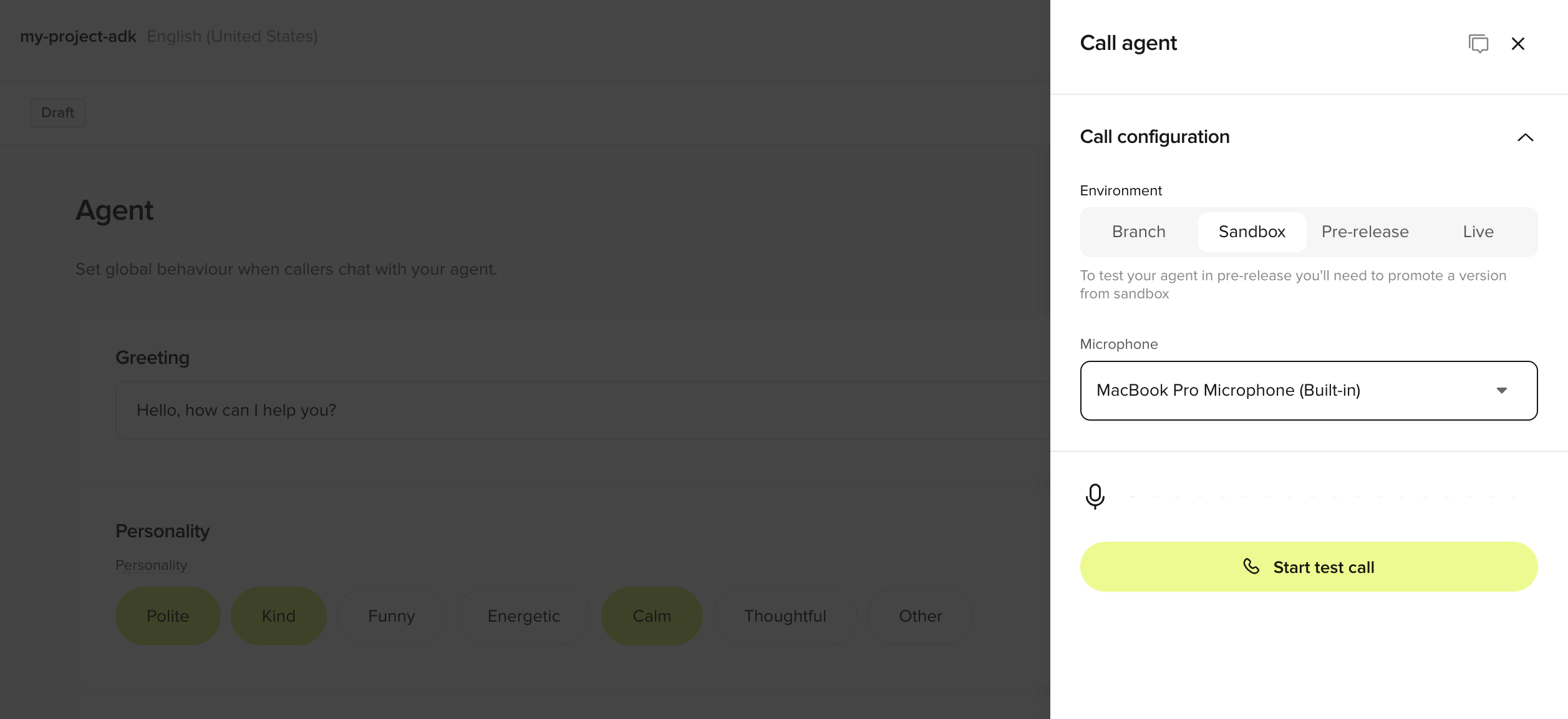

Test in-browser (~2 min)

- In-browser call

- Webchat preview

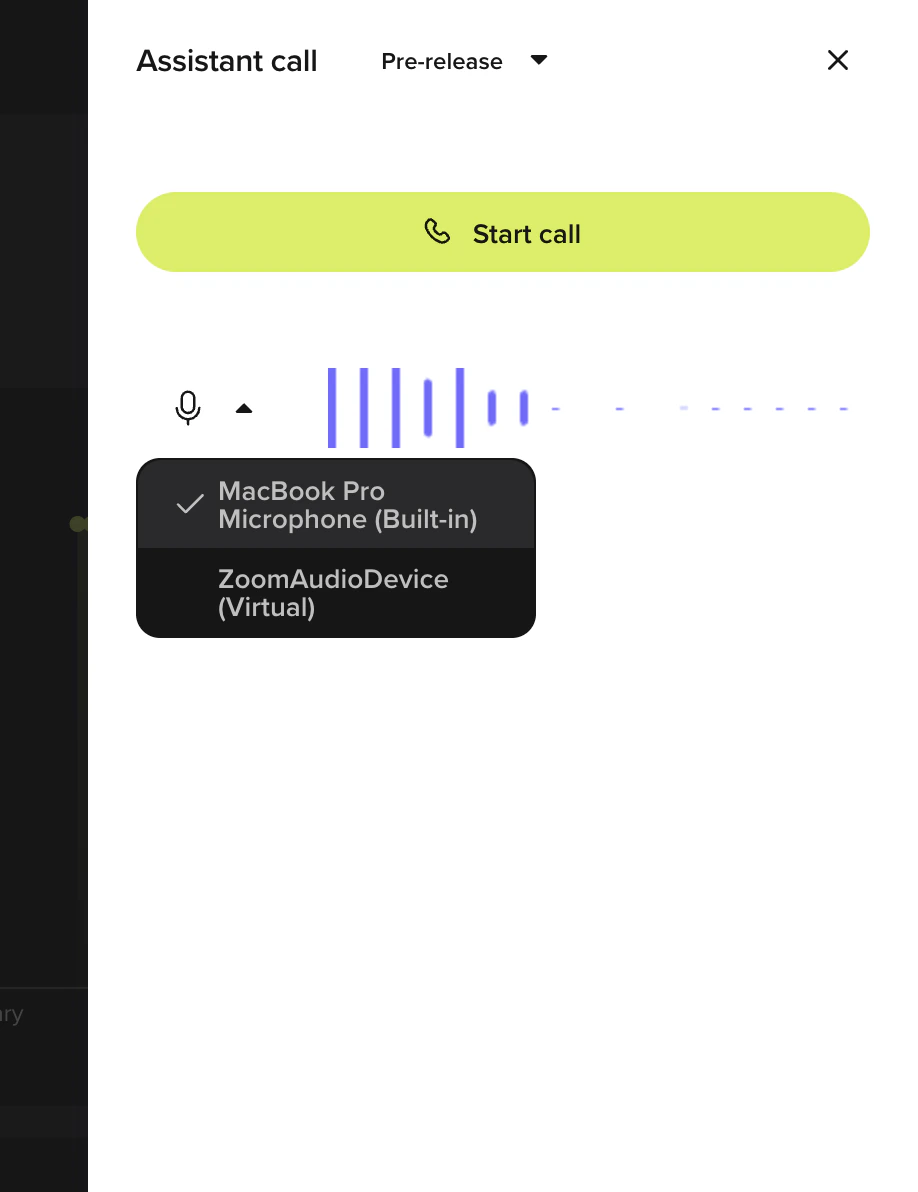

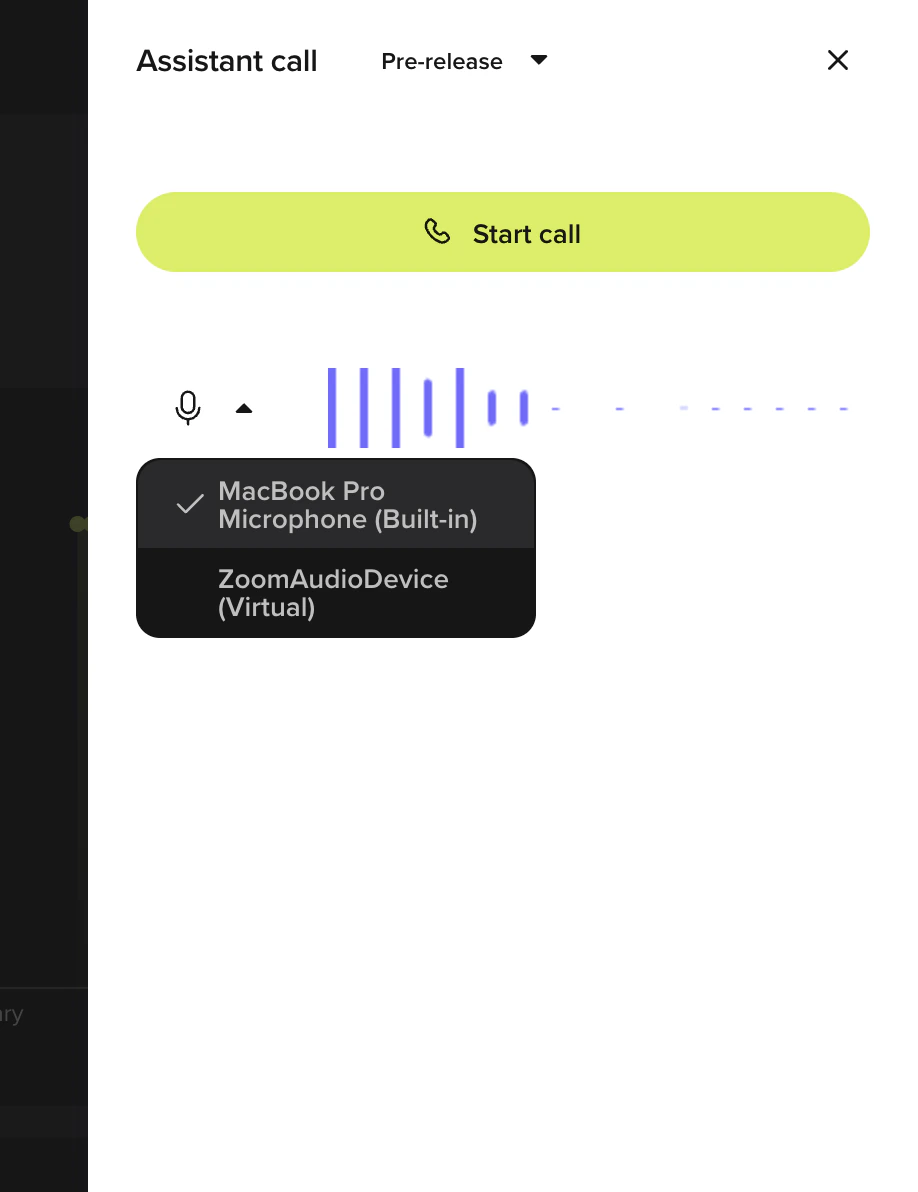

The fastest way to test. Uses your device’s microphone over WebRTC. No number to dial, no app to install.

- Click Test in the top-right corner to open the Agent Debugging side panel

- Choose Call agent under Voice

- Select Sandbox from the environment dropdown

- Begin speaking to your agent

Embed or share (~1 min)

Publish your agent and put it in front of users.

- Web Calling widget

Embed a click-to-call voice widget on any website. WebRTC end-to-end, no number to dial, no app to install.

- Go to Configure > Web Calling in the sidebar

- Copy the embed snippet

- Paste it into your site’s HTML

Promote through environments when you’re ready:

- Go to Deployments > Environments in the sidebar

- Click Promote to Live to make the current Sandbox build production-ready
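If your site is built from static HTML files, the paste step for the Web Calling widget can be scripted. The sketch below is only an illustration: `inject_snippet` is a hypothetical helper, `EMBED_SNIPPET` is a placeholder rather than the real snippet (copy that from Configure > Web Calling), and placing it just before the closing `</body>` tag is an assumption, not something this guide specifies.

```python
import re

# Matches a closing body tag in any letter case, e.g. </body> or </BODY>.
_BODY_CLOSE = re.compile(r"</body\s*>", re.IGNORECASE)


def inject_snippet(page_html: str, snippet: str) -> str:
    """Insert `snippet` just before the last closing body tag.

    Hypothetical helper: if the page has no closing body tag,
    the snippet is appended to the end of the document instead.
    """
    matches = list(_BODY_CLOSE.finditer(page_html))
    if not matches:
        return page_html + "\n" + snippet + "\n"
    idx = matches[-1].start()
    return page_html[:idx] + snippet + "\n" + page_html[idx:]


# Placeholder only: replace with the snippet copied from Configure > Web Calling.
EMBED_SNIPPET = "<!-- paste the Web Calling embed snippet here -->"

page = "<html><body><h1>Contact us</h1></body></html>"
updated = inject_snippet(page, EMBED_SNIPPET)
print(updated)
```

For a single page, pasting by hand is simpler. A helper like this is mainly useful when the same snippet has to go into many pages.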

Next steps

Master Agent Builder

Prompt patterns and the plan-review workflow.

Embed Web Calling

Voice widget setup, channel-mix matrix, and styling.

Build flows

Multi-step workflows for bookings, forms, and structured tasks.

Build locally with the ADK

Pull your project as YAML and Python, edit anywhere, push back.

Create an account, build an agent, add knowledge, test it, and deploy. Five steps.

Prerequisites

- A use case in mind (e.g., customer support, reservations, FAQ)

Create your account (~1 min)

Go to the sign-up page and create your PolyAI account.

- Google SSO

- Email and password

- Click “Sign up with Google”

- Select your Google account or enter your credentials

- Click “Continue” to authorize PolyAI

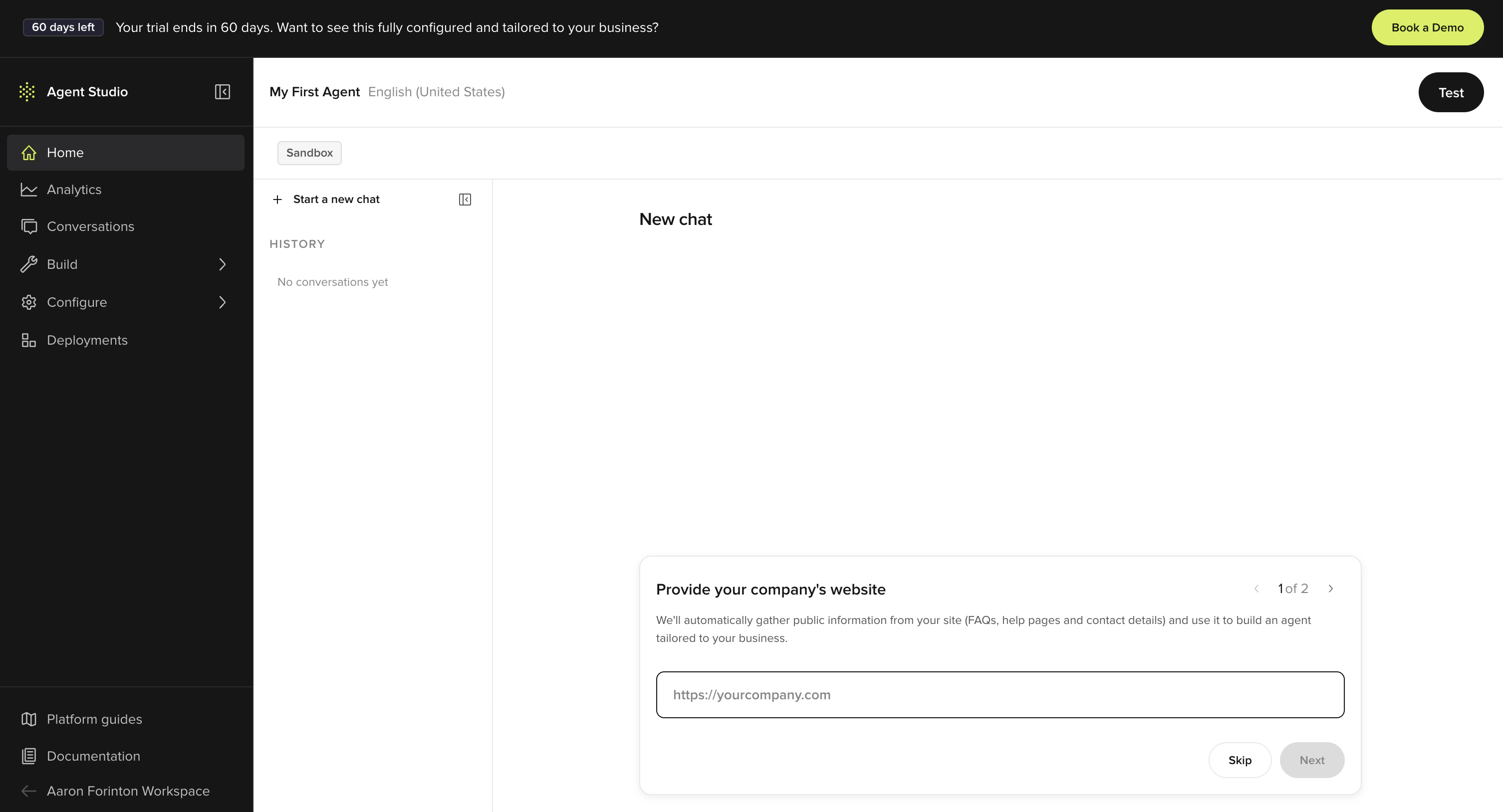

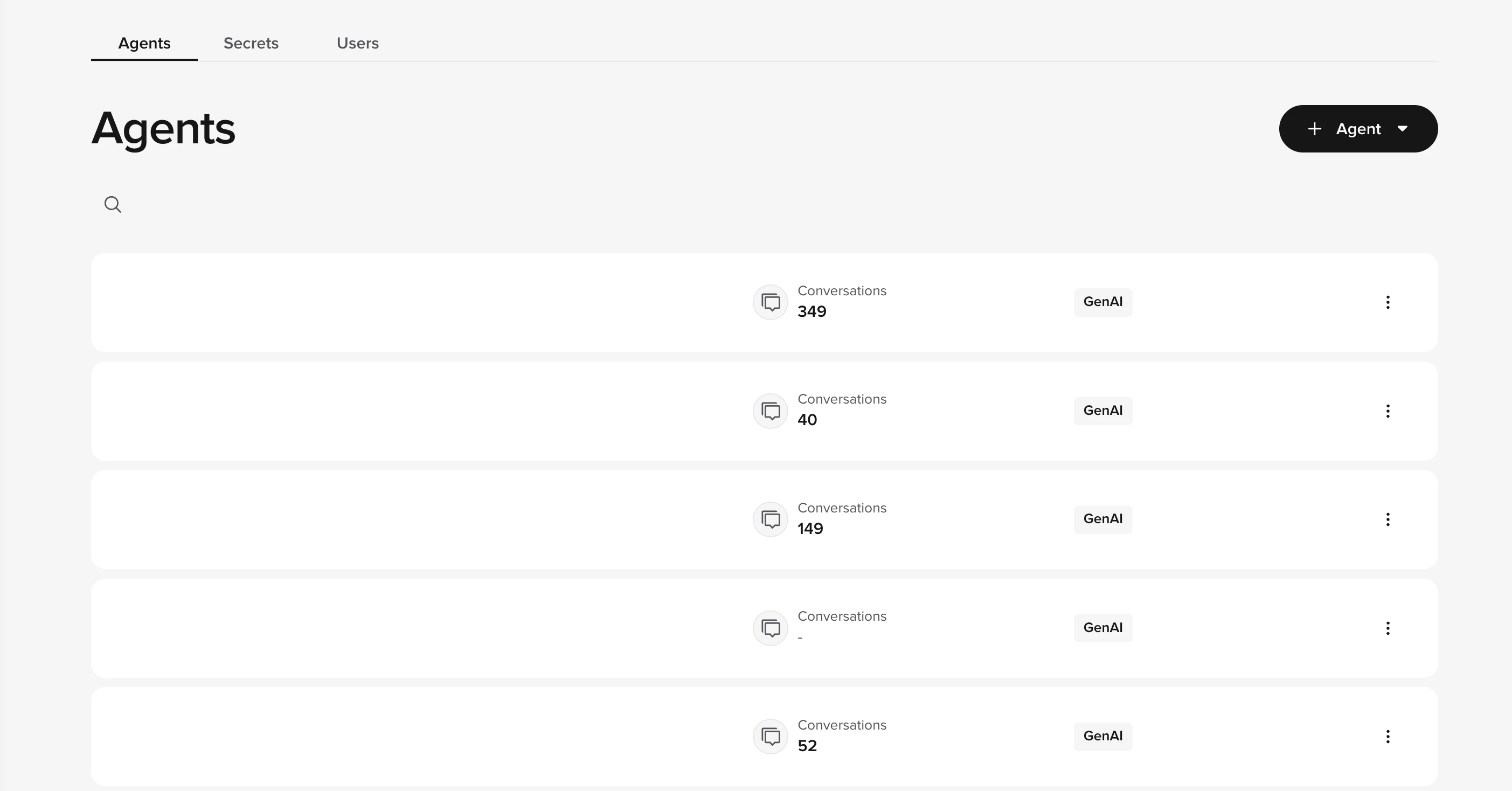

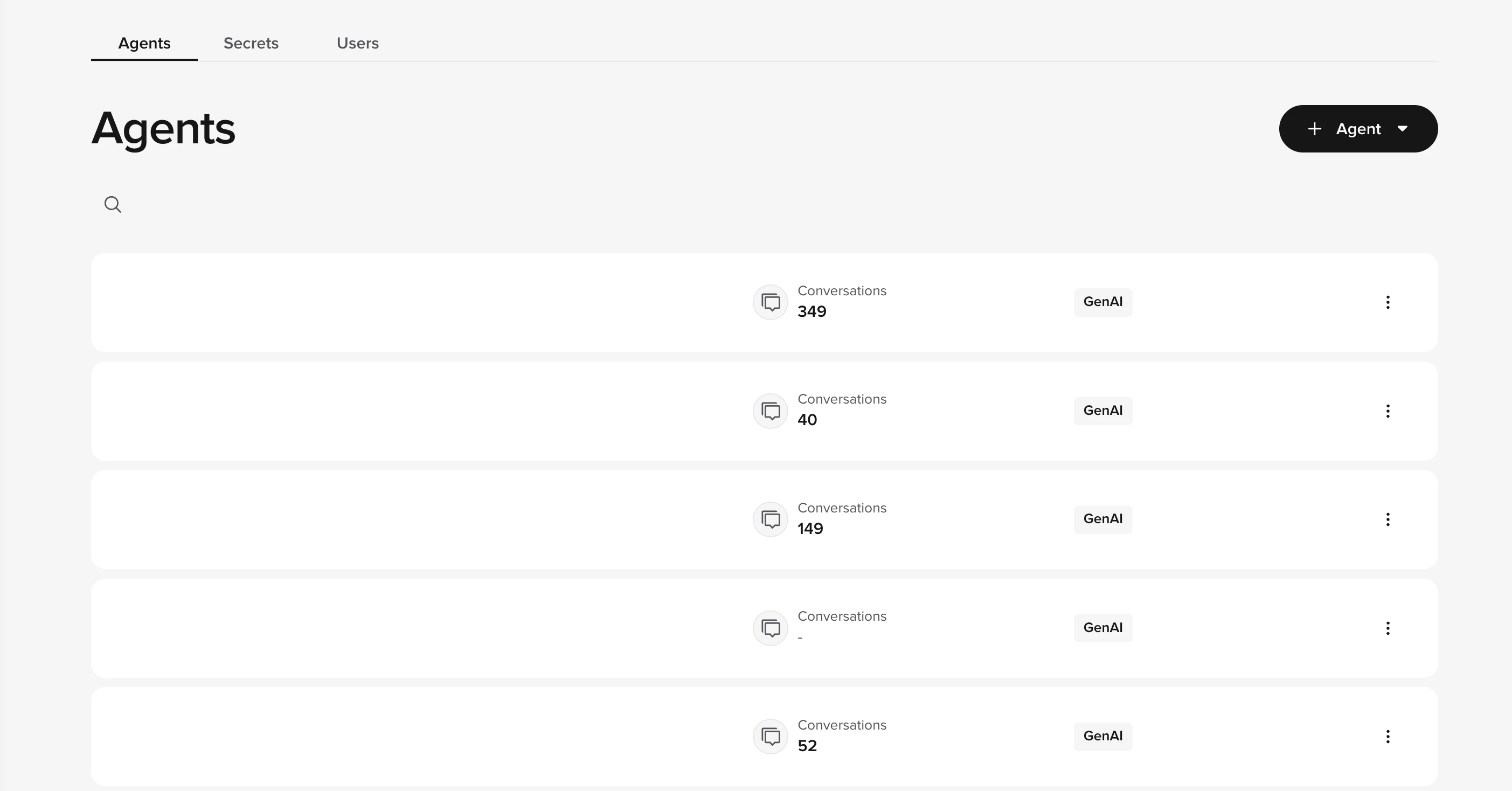

Create your agent (~2 min)

From the home page, click + Agent to start the agent creation wizard. You can create a blank agent or import an existing configuration.

Configure the basics:

- Agent name: internal identifier for your project

- Response language: primary language for responses (see multilingual support for additional languages)

- Voice: select from available text-to-speech (TTS) voices

- Welcome greeting: first message users receive (can be customized later in agent settings)

Add knowledge (~5 min)

Navigate to Build > Knowledge > Managed Topics in the sidebar.

Click Add topic and provide:

- Topic name: what this topic covers (e.g., “Store hours”)

- Sample questions: up to 20 ways users might ask (e.g., “When are you open?”)

- Answer: the response your agent should give

- Upload PDFs or URLs to auto-generate topics

- Connect external knowledge sources like Zendesk or Google Sheets using the Connected tab in Knowledge

- Add actions to trigger handoffs, SMS, or other behaviors
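When drafting topics before entering them in the UI, it can help to think of each one as a small record. The sketch below is an illustrative model only, not PolyAI's schema or API: the `Topic` class and its field names are assumptions, the answer text is made up, and the only rule taken from this guide is the limit of 20 sample questions.

```python
from dataclasses import dataclass, field

MAX_SAMPLE_QUESTIONS = 20  # limit stated in this guide


@dataclass
class Topic:
    """Illustrative model of a managed topic (hypothetical, not PolyAI's schema)."""

    name: str
    answer: str
    sample_questions: list[str] = field(default_factory=list)

    def __post_init__(self) -> None:
        # Reject drafts that could not be entered as a complete topic.
        if not self.name.strip():
            raise ValueError("topic name must not be empty")
        if not self.answer.strip():
            raise ValueError("answer must not be empty")
        if len(self.sample_questions) > MAX_SAMPLE_QUESTIONS:
            raise ValueError(
                f"at most {MAX_SAMPLE_QUESTIONS} sample questions, "
                f"got {len(self.sample_questions)}"
            )


# Example draft; the answer text is invented for illustration.
store_hours = Topic(
    name="Store hours",
    answer="We are open 9am to 5pm, Monday to Friday.",
    sample_questions=["When are you open?", "What time do you close?"],
)
print(store_hours.name, len(store_hours.sample_questions))
```

Checking drafts this way catches an empty answer or an over-long list of sample questions before you start typing them into Managed Topics.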

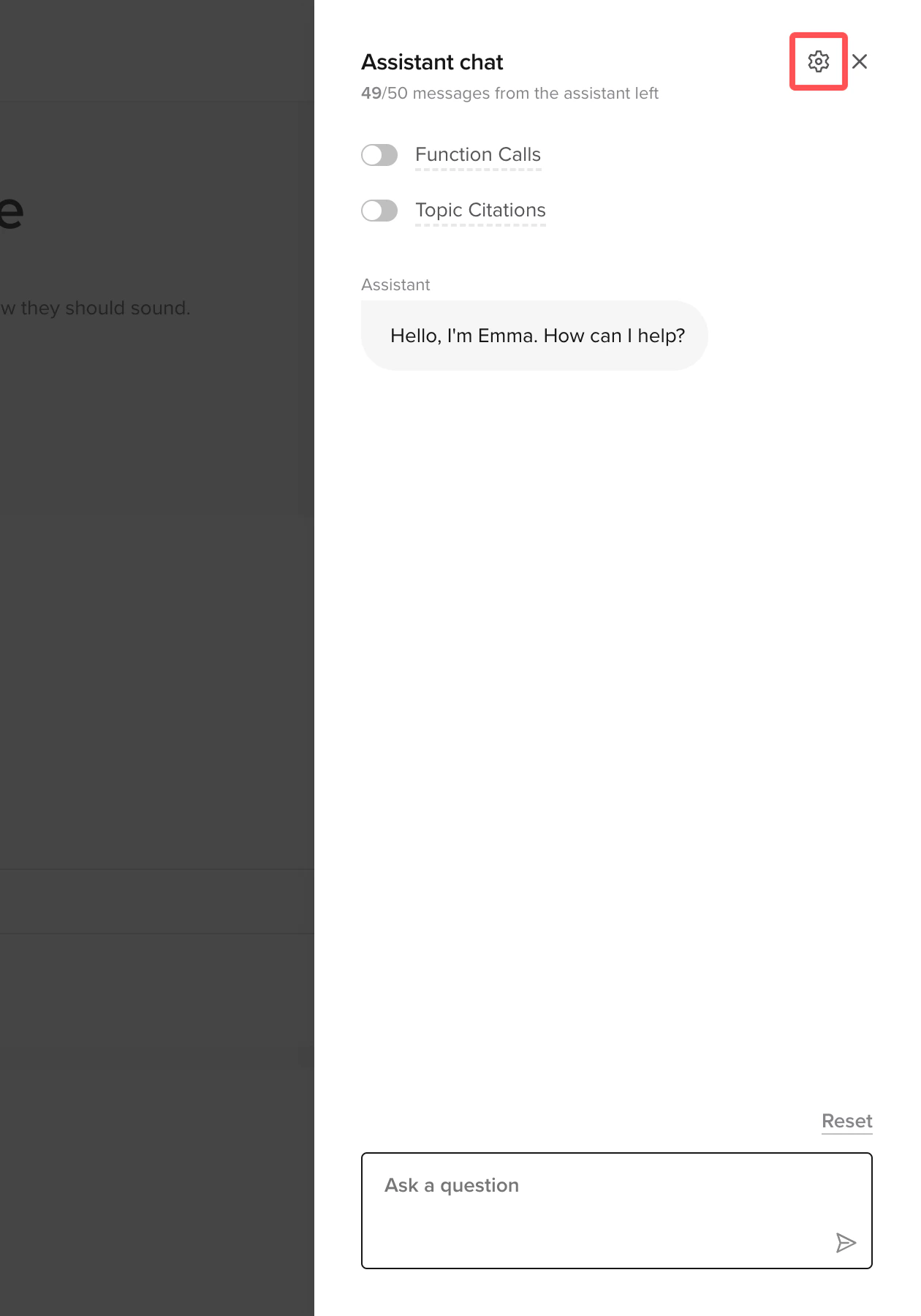

Test your agent (~2 min)

- In-browser call

- Webchat

- Phone

The fastest way to test. It uses your device’s microphone directly.

- Click the phone icon in the top-right corner

- Select Sandbox from the environment dropdown

- Begin speaking to your agent

Testing tips:

- Use specific keywords to trigger your agent’s topics

- Test with different accents and speaking styles

- For multilingual agents, switch languages mid-conversation to test detection

- Review conversations in the Conversations dashboard after testing

Deploy to production (~1 min)

Promote your agent through the deployment pipeline:

- Go to Deployments > Environments in the sidebar

- Click Promote to Pre-release for user acceptance testing (if available in your project)

- Click Promote to Live to make your agent production-ready
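The promotion order above can be summarized as a small state model. This is a sketch for reasoning about the pipeline, not a PolyAI API: `next_environment` and the `has_pre_release` flag are hypothetical names, and the stage names are taken from the steps in this guide.

```python
from typing import Optional

# Environment order as described in this guide.
PIPELINE = ["Sandbox", "Pre-release", "Live"]


def next_environment(current: str, has_pre_release: bool = True) -> Optional[str]:
    """Return the environment a build is promoted to next, or None at the end.

    Set `has_pre_release=False` for projects without a Pre-release stage.
    Raises ValueError if `current` is not a stage in the project's pipeline.
    """
    stages = [s for s in PIPELINE if has_pre_release or s != "Pre-release"]
    idx = stages.index(current)
    return stages[idx + 1] if idx + 1 < len(stages) else None


print(next_environment("Sandbox"))
print(next_environment("Sandbox", has_pre_release=False))
print(next_environment("Live"))
```

The point of the model is that a build never skips forward arbitrarily: it moves one stage at a time, and Pre-release is only part of the path when the project has it.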

Next steps

Add advanced features

Connect APIs and add dynamic behavior with Python functions

Build conversation flows

Multi-step workflows for bookings, forms, and structured tasks

Configure voice settings

TTS, voice selection, and audio settings

Monitor performance

Dashboards, conversation review, and metrics