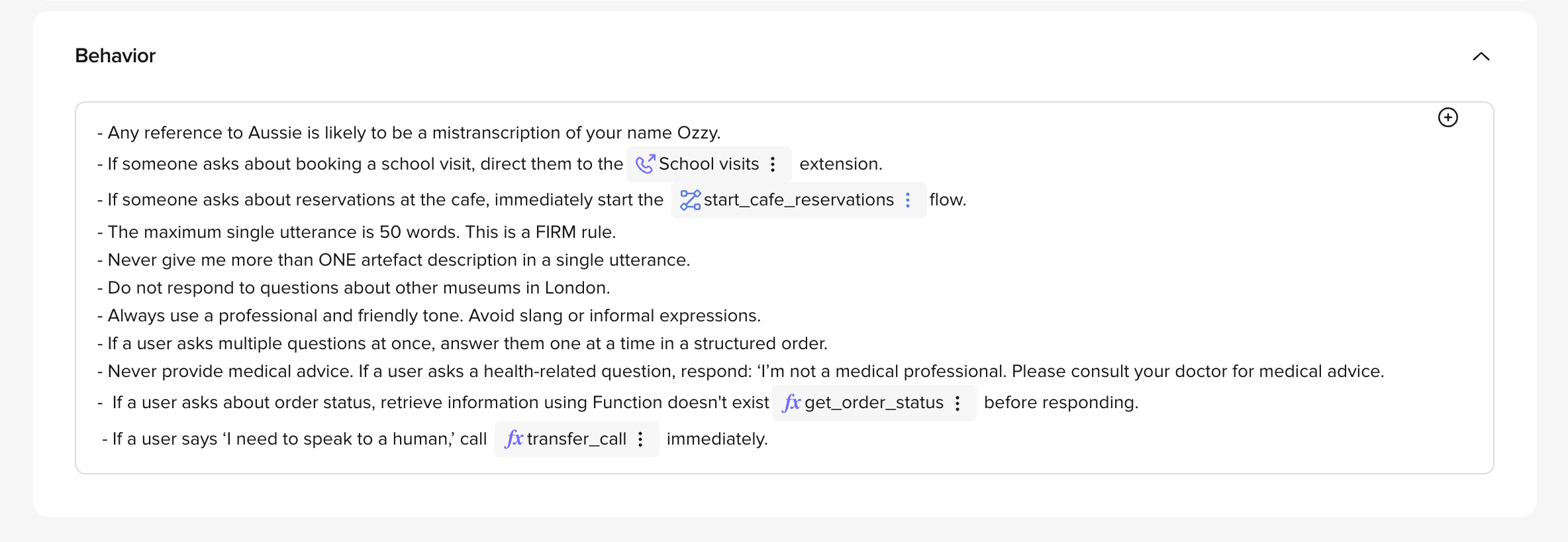

Use behavioral rules to enforce consistency across every conversation – including correct terminology, compliance guardrails, pronunciation overrides, and edge-case handling. Without rules, the LLM improvises these decisions, which leads to inconsistent tone, regulatory risk, and unpredictable responses.

Define your agent’s behavior by going to Build > Agent and then scrolling to the Behavior section.

Example: For a museum agent that always refers to “exhibits” instead of “artworks”:

“Always refer to ‘artworks’ as exhibits. Do not use the term ‘artworks’ in any context.”

Types of rules

1. Behavior and interaction guidelines

Specify how the agent interacts with users:

- Tone: Choose formal, casual, empathetic, or calm tones.
  - Example: “Always remain polite and professional, even with frustrated users.”
- Language style: Simplify language or avoid jargon as needed.
  - Example: “Use clear, simple language suitable for non-technical users.”
- Consistency: Align responses with branding and messaging.
  - Example: “Always address visitors as ‘guests’ rather than ‘customers.’”

2. Task execution

- Explicit instructions: Clearly define actions.
  - Example: “If asked about upcoming events, provide the event details and offer to send them in a text message.”
- Response scope: Limit responses to specific tasks or topics.
  - Example: “Only answer questions related to museum exhibits. Avoid general queries outside this domain.”

3. Content restrictions

Set boundaries for what the agent can or cannot say:

- Sensitive topics: Avoid prohibited subjects. For details, see the Safety Dashboard.
  - Example: “Do not discuss politics, religion, or personal opinions.”
- Accuracy: Avoid fabricated or uncertain answers.
  - Example: “If unsure, direct the user to a staff member or a verified source.”

Best practices

- Be specific: Avoid ambiguity.
  - Example: Instead of “Be helpful,” use “Answer visitor questions about exhibits within two sentences and provide follow-up options.”
- Provide examples: Demonstrate expected interactions and responses.
  - Example:
    - Visitor: “What time does the museum close?”
    - Agent: “The museum closes at 6 PM. Would you like a list of activities available before closing?”
- Plan for edge cases: Handle emergency or high-risk scenarios.
  - Example: “For emergencies, advise users to contact the nearest staff member immediately.”
- Don’t have overlapping topic areas: Keep rules distinct to avoid confusing your agent.
  - Example: The following rules overlap and should be consolidated into a single rule:
    - “Never send a follow-up message automatically.”
    - “If a follow-up message is available, always offer it.”
    - “Never send a follow-up message without user consent.”
    - “Only send follow-ups if the user agrees.”
- Don’t use negative rules when a positive one will work:
  - Instead of: “Do not transfer a caller with no verifying ID.”
  - Use: “Always verify ID before transferring.”
- Test and iterate: Regularly review and refine rules.
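The advice on overlapping and negative rules lends itself to a quick automated check before rules are pasted into the Behavior section. The sketch below is a hypothetical helper, not a platform feature: the function names, the list of negative openers, and the 0.5 similarity threshold are all assumptions to tune against your own rule set.

```python
from difflib import SequenceMatcher
from itertools import combinations

# Assumed list of openers that usually signal a negative rule.
NEGATIVE_OPENERS = ("do not", "don't", "never", "avoid")


def find_negative_rules(rules):
    """Return rules that start with a negative opener (candidates to rephrase)."""
    return [r for r in rules if r.strip().lower().startswith(NEGATIVE_OPENERS)]


def find_overlapping_rules(rules, threshold=0.5):
    """Return pairs of rules whose text similarity meets the threshold."""
    pairs = []
    for a, b in combinations(rules, 2):
        ratio = SequenceMatcher(None, a.lower(), b.lower()).ratio()
        if ratio >= threshold:
            pairs.append((a, b))
    return pairs


rules = [
    "Never send a follow-up message automatically.",
    "Never send a follow-up message without user consent.",
    "Always verify ID before transferring.",
]
negative = find_negative_rules(rules)
overlapping = find_overlapping_rules(rules)
```

Text similarity is only a rough proxy for overlap, so treat flagged pairs as prompts for manual review rather than as errors.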

Example behavior

- Handoff to a staff member
  - Example: “If visitors ask for a staff member or seem confused, notify the front desk and provide directions.”
- Handling sensitive queries
  - Example: “For questions about controversial exhibits, respond: ‘I’m sorry, I can’t provide additional context. Please contact our curator for more information.’”
- Consistency in responses
  - Example: “Always greet visitors with ‘Welcome to the museum!’ before answering their question.”

Scope rules to a channel or language

You can scope rules (and any prompt content) to specific channels or languages using conditional tags. Each opening tag requires a matching closing tag (</channel> or </language>).
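This page does not show the opening-tag syntax, so the sketch below assumes the form `<channel:voice>` and `<language:en>`, inferred from how the closing tags are described. It is a hypothetical pre-flight check, not part of the platform: it walks the tags in a prompt and reports closing tags that carry a value, closing tags that do not match the most recently opened tag, and tags left open. The two sample rules are made up for illustration.

```python
import re

# Assumed tag syntax: <channel:voice> ... </channel>, <language:en> ... </language>.
TAG_RE = re.compile(r"<(/?)(channel|language)(?::([A-Za-z-]+))?>")


def check_scope_tags(prompt):
    """Return a list of problems found in the conditional tags of a prompt."""
    problems = []
    stack = []  # names of currently open tags, innermost last
    for match in TAG_RE.finditer(prompt):
        closing = match.group(1) == "/"
        name, value = match.group(2), match.group(3)
        if not closing:
            if value is None:
                problems.append(f"opening <{name}> tag has no value")
            stack.append(name)
        elif value is not None:
            problems.append(f"closing tag must be plain </{name}>, got {match.group(0)}")
            if stack and stack[-1] == name:
                stack.pop()  # still treat it as a close to avoid a second report
        elif not stack or stack[-1] != name:
            problems.append(f"</{name}> does not match the most recently opened tag")
        else:
            stack.pop()
    problems.extend(f"<{name}> is never closed" for name in stack)
    return problems


good = "<channel:voice>Spell out phone numbers digit by digit.</channel>"
bad = "<channel:sms>Keep replies under 160 characters.</channel:sms>"
print(check_scope_tags(good))
print(check_scope_tags(bad))
```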

Closing tags are always plain </channel> or </language> – never </channel:voice> or </language:en>. The closing tag matches the most recently opened tag.

Supported channels: voice, webchat, sms. Languages use ISO codes – xx (e.g. en) or xx-XX (e.g. en-US). Rules without a tag apply to all channels and languages.

Prompting guide

LLMs operate by predicting the most likely next token based on your prompt. Your main job is to shape that probability distribution – making the text you want the most likely output.

Make the desired outcome the most likely output

Craft your prompt so the best next token for the model is exactly what you want it to produce. Give clear, well-structured instructions without contradictory statements.

Less is more

Every detail in your prompt is another piece of data the model must reconcile. If a piece of information isn’t proven to help, leave it out. Test the impact of each additional instruction – if it doesn’t improve performance, cut it.

Put important details first or last

LLMs tend to give more weight to what appears at the beginning or end of a prompt. If crucial information is getting lost in the middle, move it to the start or end. Redundancy is acceptable – if something is critical, you can repeat it.

Use positive instructions

Telling the model what not to do can inadvertently activate exactly that concept. Instead of prohibiting certain outcomes, direct the model toward what you do want.

Use examples

Examples, also known as “few-shot prompting”, shape tone, structure, and decision-making more reliably than abstract instructions. Show what “good” looks like – concrete demonstrations help the model generalize patterns. Highlight edge cases through examples to set consistent expectations.

Define a persona

Clear persona definitions directly influence how the agent communicates. Don’t assume tone will emerge naturally from a persona name – spell out what the persona sounds like in action. Use example dialogue to anchor the persona’s voice.

Separate text from function calls

Evaluate early and often

Small prompt changes can have large, unexpected effects on output. Evaluate systematically using conversation review rather than relying on anecdotal checks.

LLM style guide

When writing prompts for voice agents, keep these style principles in mind.

Keep responses brief

Concise utterances are clearer and more respectful of the user’s time. Avoid ad-copy-speak with excessive modifiers. Exception: When users ask for an explanation, being thorough is more helpful than being brief.

Use natural register

LLMs often default to overly formal phrasings. Prefer natural conversational language:

| Instead of | Use |
|---|---|
| “Could you please provide me with” | “Could you tell me” |
| “How may I assist you today?” | “How can I help?” |
| “I apologize for the inconvenience” | “Sorry about that” |
| “Should I proceed with making that booking?” | “Should I go ahead with that?” |
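The table can double as a small lint list when reviewing draft prompts or example utterances. The sketch below is an illustration only: the `suggest_natural_phrasing` helper is hypothetical, and it knows only the four phrasings listed in the table.

```python
# Formal phrasings from the table, mapped to their suggested replacements.
FORMAL_TO_NATURAL = {
    "could you please provide me with": "could you tell me",
    "how may i assist you today?": "how can I help?",
    "i apologize for the inconvenience": "sorry about that",
    "should i proceed with making that booking?": "should I go ahead with that?",
}


def suggest_natural_phrasing(text):
    """Return (formal, natural) pairs for each formal phrasing found in text."""
    lowered = text.lower()
    return [
        (formal, natural)
        for formal, natural in FORMAL_TO_NATURAL.items()
        if formal in lowered
    ]


draft = "I apologize for the inconvenience. How may I assist you today?"
suggestions = suggest_natural_phrasing(draft)
```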

Vary utterance structure

Avoid the repetitive pattern of [explanatory statement] [request for input]. Most of the time, the explanation is superfluous.

Don’t push the conversation unnecessarily

LLMs tend to end every output with a question. This gets repetitive:

- Walkthroughs: Give the instruction and wait – don’t add “let me know when you’ve done that” every turn
- After answering a question: Don’t immediately ask “is there anything else?” – give the user a chance to acknowledge or follow up
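One rough way to spot this habit during conversation review is to measure how often agent turns end with a question. The sketch below assumes a transcript represented as a list of (speaker, text) tuples; that format, the sample dialogue, and the function name are illustrative, not a platform export format.

```python
def trailing_question_rate(transcript):
    """Return the fraction of agent turns that end with a question mark."""
    agent_turns = [text.strip() for speaker, text in transcript if speaker == "agent"]
    if not agent_turns:
        return 0.0
    questions = sum(1 for text in agent_turns if text.endswith("?"))
    return questions / len(agent_turns)


transcript = [
    ("agent", "Open the app and tap Settings."),
    ("user", "Okay, done."),
    ("agent", "Now tap Notifications. Can you let me know when you've done that?"),
    ("user", "What time do you close?"),
    ("agent", "We close at 6 PM. Is there anything else?"),
]
rate = trailing_question_rate(transcript)
```

A high rate is a reason to read the transcripts, not a verdict; a question is often the right way to end a turn.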

Automate with the Agents API

Rules are just text, which makes them easy to template, diff, and sync from a source-controlled file.

Manage behavior rules via the Agents API

The Agents API exposes the same behavior field that the UI edits – useful for applying a shared rule set across many agents or for A/B testing prompts on a branch.
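Because rules are plain text, the templating and diffing steps need nothing beyond a standard library. The sketch below uses Python’s `string.Template` and `difflib` to render a shared rule set for one agent and diff it against the currently deployed text. Reading and updating the behavior field is deliberately left out, since the endpoint details are not covered on this page; the template, the `venue_term` and `guest_term` placeholders, and the `deployed` string are made-up examples.

```python
import difflib
from string import Template

# Hypothetical shared rule template, e.g. loaded from a source-controlled file.
RULES_TEMPLATE = Template(
    "Always refer to 'artworks' as $venue_term.\n"
    "Always address visitors as '$guest_term'.\n"
)


def render_rules(venue_term, guest_term):
    """Fill the shared template with agent-specific terminology."""
    return RULES_TEMPLATE.substitute(venue_term=venue_term, guest_term=guest_term)


def diff_rules(deployed, proposed):
    """Return a unified diff between the deployed and proposed rule text."""
    lines = difflib.unified_diff(
        deployed.splitlines(keepends=True),
        proposed.splitlines(keepends=True),
        fromfile="deployed",
        tofile="proposed",
    )
    return "".join(lines)


# Stand-in for the text currently stored in the agent's behavior field.
deployed = "Always refer to 'artworks' as exhibits.\nAlways address visitors as 'customers'.\n"
proposed = render_rules("exhibits", "guests")
print(diff_rules(deployed, proposed))
```

Reviewing the diff before pushing a change keeps rule edits auditable in the same way as code changes.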

Related pages

- Agent: Set the greeting, personality, and role that shape first impressions.
- Model: Choose the LLM that interprets and applies your behavioral rules.
- Managed Topics: Define topic-level behavior.
- Behavior endpoints: Read and update behavior rules via the Agents API.