Monitor flagged conversations and evaluate how your agent handles harmful content. Without regular safety reviews, harmful content can reach users or trigger compliance violations that you only discover after the fact.

Metrics

| Metric | What it shows |
|---|---|
| Caller utterance risk level | How risky incoming messages are and how well the agent manages them |
| Total calls | Total number of calls in the selected period |
| Calls managed for risk | How often safety filters were triggered (count and percentage) |
| Distribution of flagged calls | Trends in flagged calls over time |
| Caller utterance category | Breakdown by hate, self-harm, sexual content, and violence |
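The dashboard calculates these metrics for you. As a rough illustration of the arithmetic behind "Calls managed for risk" and "Distribution of flagged calls", the Python sketch below assumes a hypothetical list of call records, each carrying a call date and the set of categories whose filters triggered. `CallRecord`, `summarize`, and the category strings are illustrative names, not part of any PolyAI API or export format.

```python
from collections import Counter
from dataclasses import dataclass, field
from datetime import date


@dataclass
class CallRecord:
    """Hypothetical per-call record; not a PolyAI export format."""
    call_date: date
    # Categories whose safety filter triggered during the call, e.g. {"hate"}
    flagged_categories: set[str] = field(default_factory=set)


def summarize(calls: list[CallRecord]) -> dict:
    total = len(calls)
    # A call counts as "managed for risk" if at least one filter triggered
    flagged = [c for c in calls if c.flagged_categories]
    pct = 100.0 * len(flagged) / total if total else 0.0
    # Flagged calls per day: the "distribution over time"
    per_day = Counter(c.call_date for c in flagged)
    # Flagged calls per category; a call flagged twice counts once per category
    per_category = Counter(cat for c in flagged for cat in c.flagged_categories)
    return {
        "total_calls": total,
        "calls_managed_for_risk": len(flagged),
        "calls_managed_pct": round(pct, 1),
        "flagged_per_day": dict(sorted(per_day.items())),
        "by_category": dict(per_category),
    }
```

In this sketch a call flagged in two categories counts once toward the percentage but once per category in `by_category`; the dashboard's own counting rules may differ.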

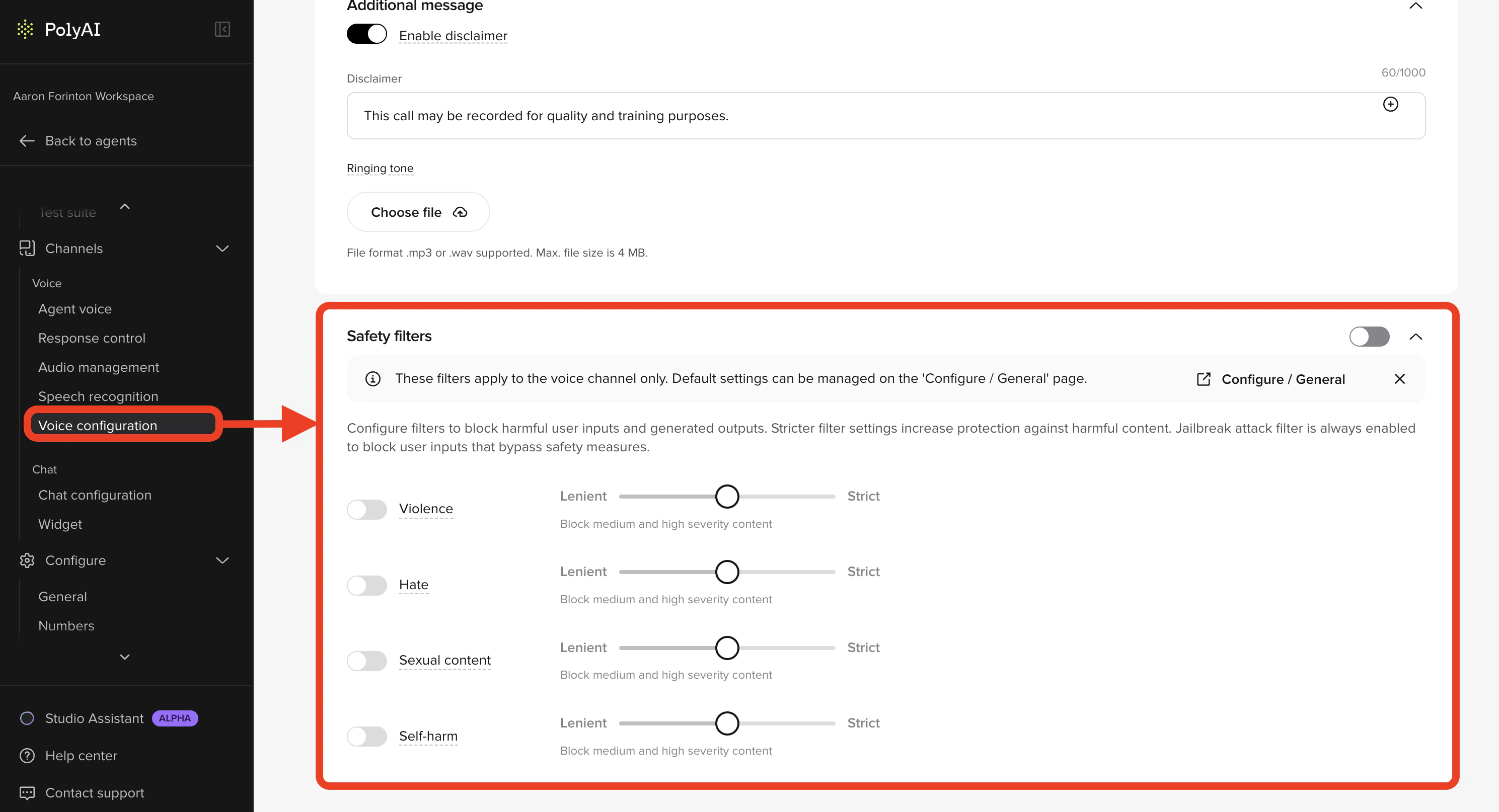

Editing safety filters

Safety filters are configured on a per-channel basis. Each channel (voice and chat) can have its own filter settings that override the project-wide defaults.

- Voice channel – go to Channels > Voice > Voice configuration

- Chat channel – go to Channels > Chat > Chat configuration

Filters apply at two points in the conversation:

- User input – catches toxic or inappropriate speech before it reaches the agent

- AI output – prevents the agent from responding with anything unsafe
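To make the override behavior concrete, the sketch below merges a channel's settings onto project-wide defaults. It assumes overrides apply per setting, so any direction or category a channel leaves unset inherits the project value; the product may instead replace the whole block. `FilterConfig`, `PROJECT_DEFAULTS`, `effective_config`, and the key names are hypothetical, and the default levels shown are illustrative rather than PolyAI's actual defaults.

```python
from typing import Optional

# Hypothetical shape: direction ("user_input" / "ai_output") -> category -> level
FilterConfig = dict[str, dict[str, str]]

# Illustrative values only; not PolyAI's actual defaults
PROJECT_DEFAULTS: FilterConfig = {
    "user_input": {"hate": "moderate", "sexual": "moderate",
                   "violence": "moderate", "self_harm": "moderate"},
    "ai_output": {"hate": "strict", "sexual": "strict",
                  "violence": "strict", "self_harm": "strict"},
}


def effective_config(defaults: FilterConfig,
                     channel: Optional[FilterConfig]) -> FilterConfig:
    """Merge a channel's overrides onto the project defaults.

    Assumption: overrides apply per setting, so anything the channel
    leaves unset falls back to the project-wide value.
    """
    # Copy the nested dicts so the defaults are never mutated
    merged = {direction: dict(levels) for direction, levels in defaults.items()}
    for direction, levels in (channel or {}).items():
        merged.setdefault(direction, {}).update(levels)
    return merged
```

For example, `effective_config(PROJECT_DEFAULTS, {"user_input": {"violence": "strict"}})` tightens violence filtering on caller input for that channel only, leaving AI output at the project values.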

Filtering categories and severity levels

Each category can be set to a different severity level:

| Severity | Behavior |
|---|---|
| Lenient | Block high severity content only |
| Moderate | Block medium and high severity content |
| Strict | Block low, medium, and high severity content |
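The table above amounts to a threshold rule: each filter level blocks content at or above a minimum severity. The sketch below encodes that rule and applies it per category, since each category can have its own level. The `Severity` scale, the `SAFE` value (assumed never to be blocked), the category keys, and the idea of a classifier returning one severity per category are all assumptions for illustration, not PolyAI's implementation.

```python
from enum import IntEnum


class Severity(IntEnum):
    """Hypothetical severity scale for a piece of content."""
    SAFE = 0  # assumption: content below "low" is never blocked
    LOW = 1
    MEDIUM = 2
    HIGH = 3


# Lowest content severity that each filter level blocks (from the table above)
BLOCK_FROM = {
    "lenient": Severity.HIGH,
    "moderate": Severity.MEDIUM,
    "strict": Severity.LOW,
}


def is_blocked(level: str, severity: Severity) -> bool:
    """True if content of this severity is blocked at this filter level."""
    return severity >= BLOCK_FROM[level]


def blocked_categories(settings: dict[str, str],
                       scores: dict[str, Severity]) -> list[str]:
    """Categories whose detected severity meets that category's own level.

    settings: category -> filter level, e.g. {"hate": "strict"}
    scores:   category -> severity from a (hypothetical) classifier
    """
    return sorted(
        category
        for category, level in settings.items()
        if is_blocked(level, scores.get(category, Severity.SAFE))
    )
```

With `settings = {"hate": "strict", "violence": "lenient"}` and `scores = {"hate": Severity.LOW, "violence": Severity.MEDIUM}`, only `"hate"` is blocked: low-severity hate meets the Strict threshold, while medium-severity violence falls below the Lenient one.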

Hate

Content that attacks or discriminates based on race, ethnicity, nationality, religion, gender identity, sexual orientation, disability, or appearance. Includes bullying, harassment, and slurs.

Sexual

Content involving explicit anatomy, sexual acts, or romantic/erotic themes – including abusive or exploitative content.

Violence

Physical harm, threats, weapons, terrorism, and other violent acts or intimidation.

Self-harm

Mentions of suicide, self-injury, eating disorders, or content about hurting oneself.

Language support

Filters have been trained and tested in English, German, Japanese, Spanish, French, Italian, Portuguese, and Chinese. Other languages are supported, but performance may vary – test thoroughly in your target language.

Best practices

- Test thoroughly: Always run your own tests to validate how filters behave with your content.

- Use the right level: Don't default to Strict – find a balance that blocks harmful content without over-filtering legitimate conversations.

- Be consistent: If you manage multiple agents or variants, use consistent flow and tool names across them to simplify reporting and comparison.

Related pages

Standard dashboard

Day-to-day performance monitoring: containment, call volume, and duration.

General settings

Configure safety filter severity levels for your project.

Conversation review

Inspect individual flagged conversations in full transcript view.