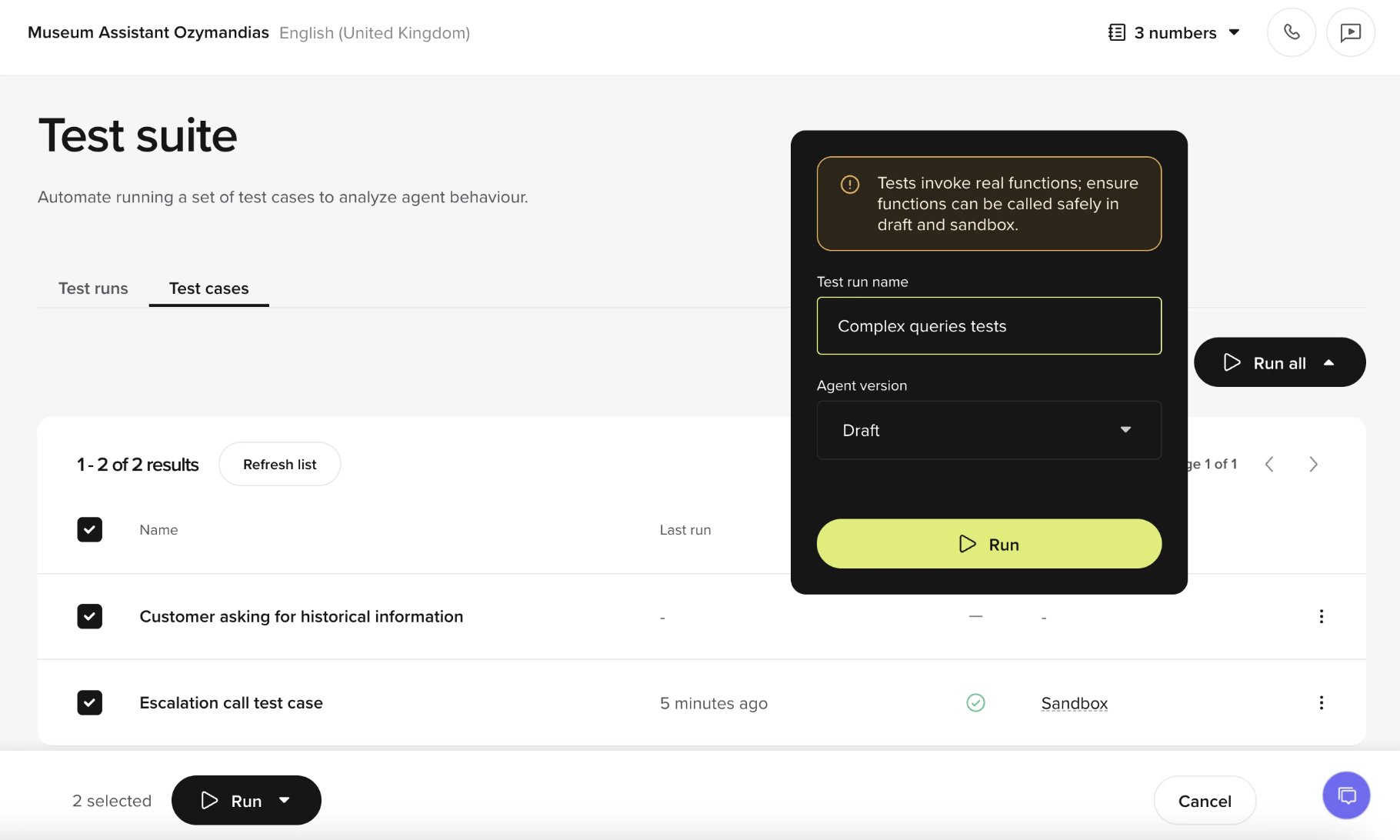

Use the test suite to save conversations as test cases, group them into sets, and re-run them against Draft or Sandbox after every change. Test cases and test sets are found under Build > Test suite.

Concepts

- Test Case – A single scenario captured from a real conversation (user messages, agent replies, and the tools invoked). Each case tracks its Last run and Outcome.

- Test Set – A named collection of Test Cases. Use sets to cover a feature area or release scope (for example, “Payments,” “Shipping,” “Core intents”). A Test Case can belong to multiple sets.

Test Cases and Test Sets run against non-production versions. Select Draft or Sandbox when you start a run.
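The relationship between cases and sets can be pictured as a small in-memory model. This is a minimal sketch for illustration only: the class names (`Case`, `CaseSet`), their fields, the helper `sets_containing`, and the tool name `cancel_booking` are all hypothetical and do not describe the test suite's internal data model or any PolyAI API. The point it shows is that a set holds references to cases rather than copies, so one case can sit in several sets at once.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Case:
    """Hypothetical model of one scenario captured from a conversation."""
    name: str
    user_messages: list[str]
    agent_replies: list[str]
    tools_invoked: list[str]
    last_run: Optional[str] = None  # when the case last ran
    outcome: Optional[str] = None   # "pass" or "fail" once it has run


@dataclass
class CaseSet:
    """Hypothetical named collection holding references to cases, not copies."""
    name: str
    cases: list[Case] = field(default_factory=list)


def sets_containing(case: Case, sets: list[CaseSet]) -> list[str]:
    """Names of every set that includes this exact case object."""
    return [s.name for s in sets if any(c is case for c in s.cases)]


cancel = Case(
    name="Caller cancels booking",
    user_messages=["I need to cancel my booking"],
    agent_replies=["Sure, can I have your booking reference?"],
    tools_invoked=["cancel_booking"],  # hypothetical tool name
)
billing = CaseSet("Billing", [cancel])
critical = CaseSet("Critical paths", [cancel])
print(sets_containing(cancel, [billing, critical]))  # ['Billing', 'Critical paths']
```

Because both sets point at the same `cancel` object, a run result recorded on the case is visible from either set.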

Create a Test Case

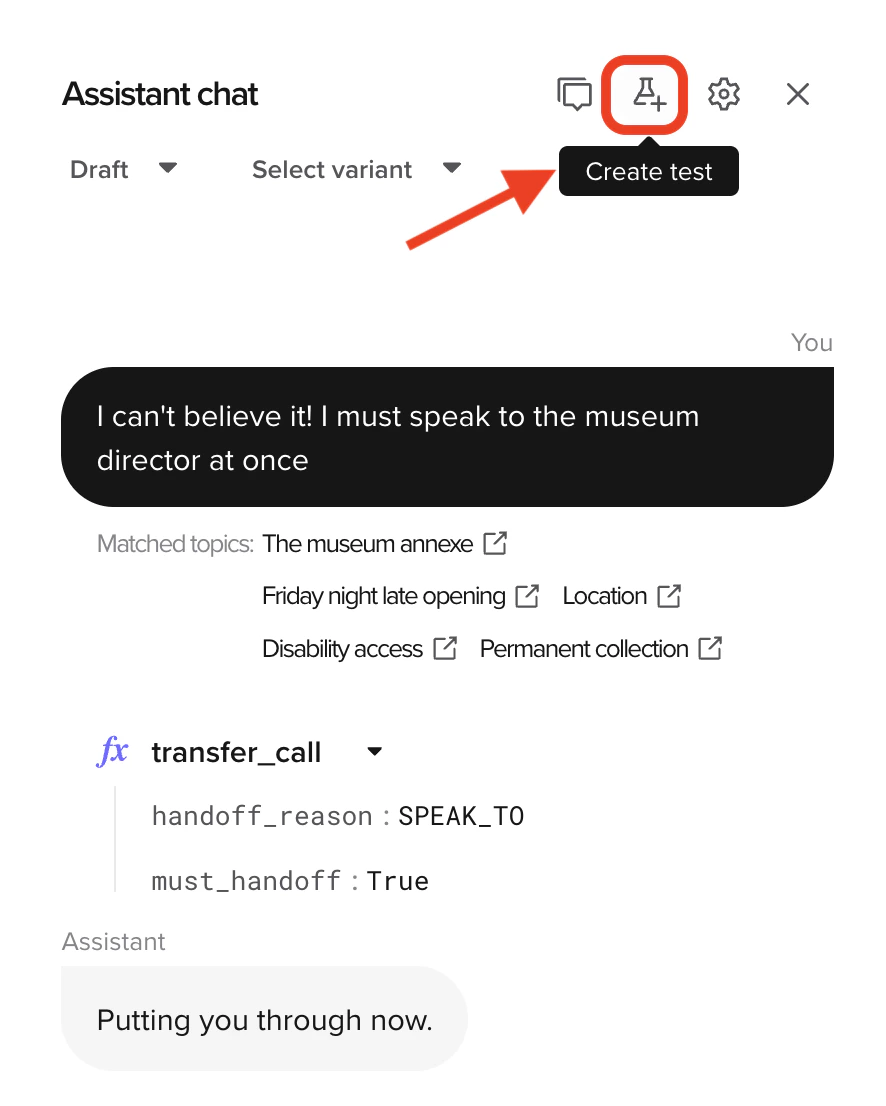

Save a test case from chat or Conversation review

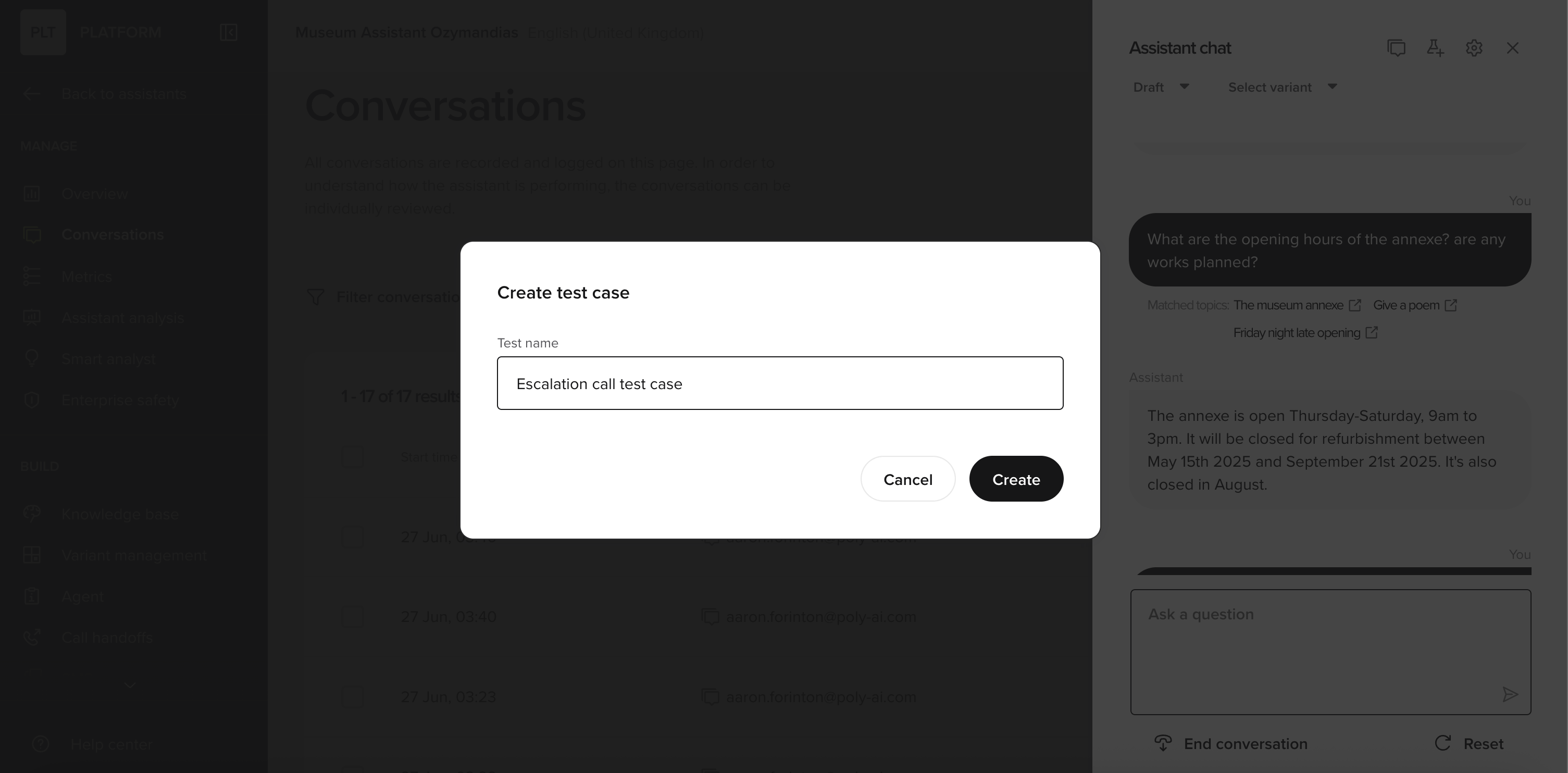

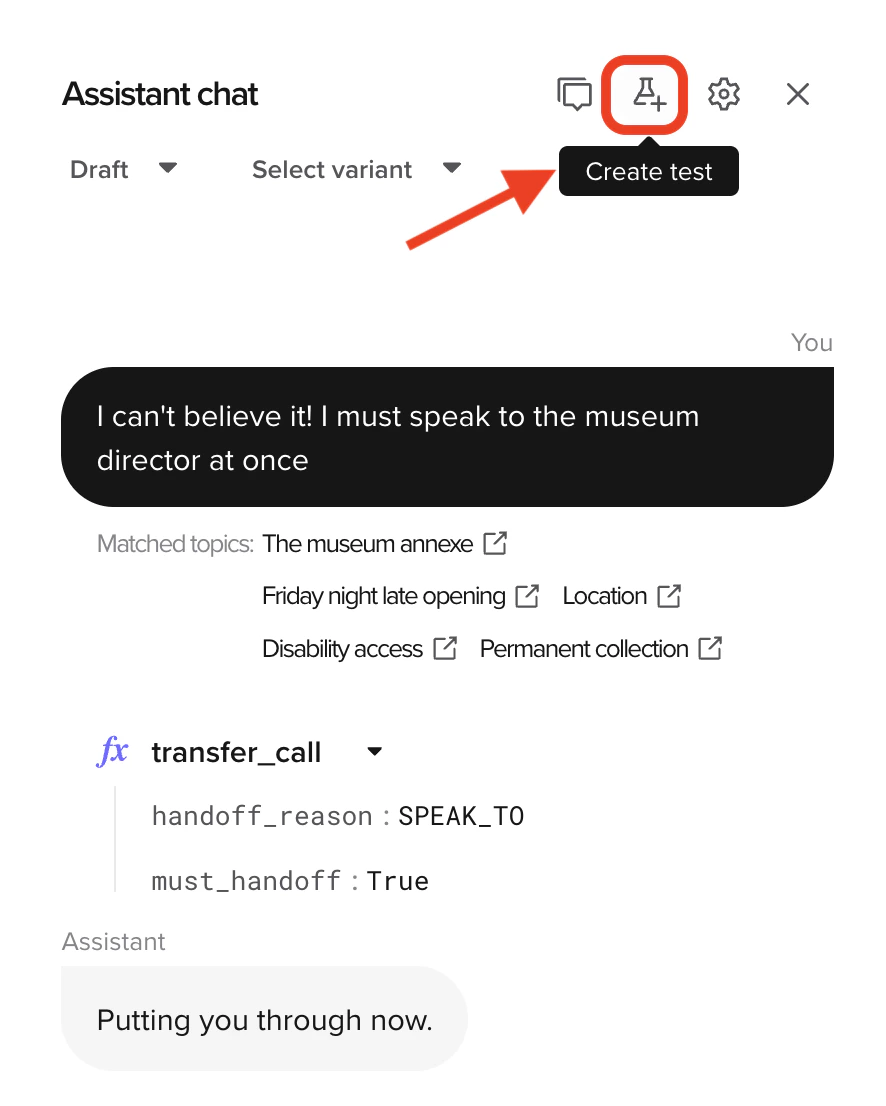

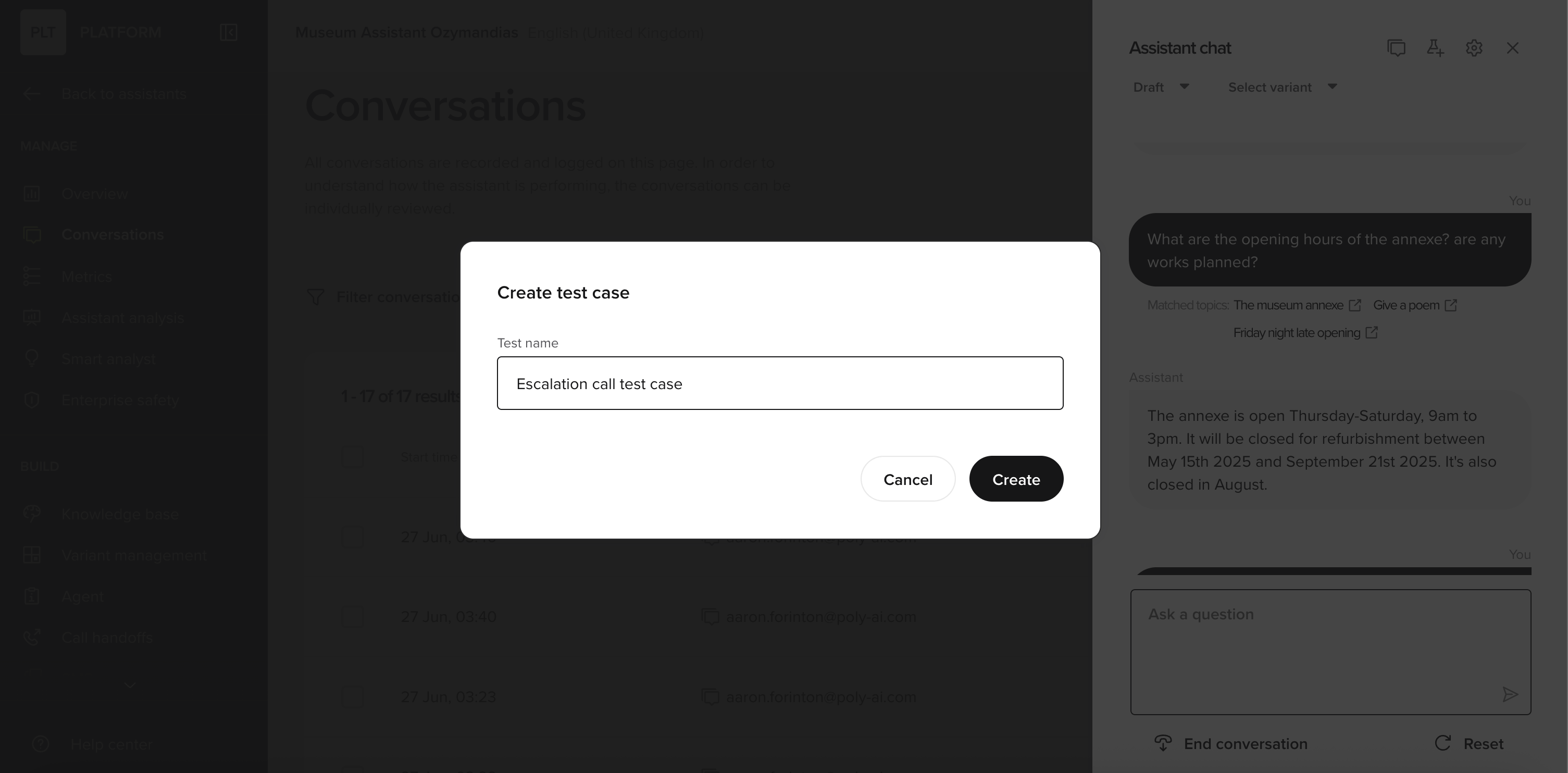

- Click the Create test button (test-tube icon) in the chat panel or from a transcript in Conversation review.

- Name the case and save it.

Create a Test Set

- Go to Build > Test suite > Test Sets and select New set.

- Give the set a name and add cases from the picker.

- A case can be added to more than one set (for example, both “Billing” and “Critical paths”).

Tip: Create focused sets (“Refunds,” “Shipping address changes,” “Escalations”) so failures point straight to the right area.

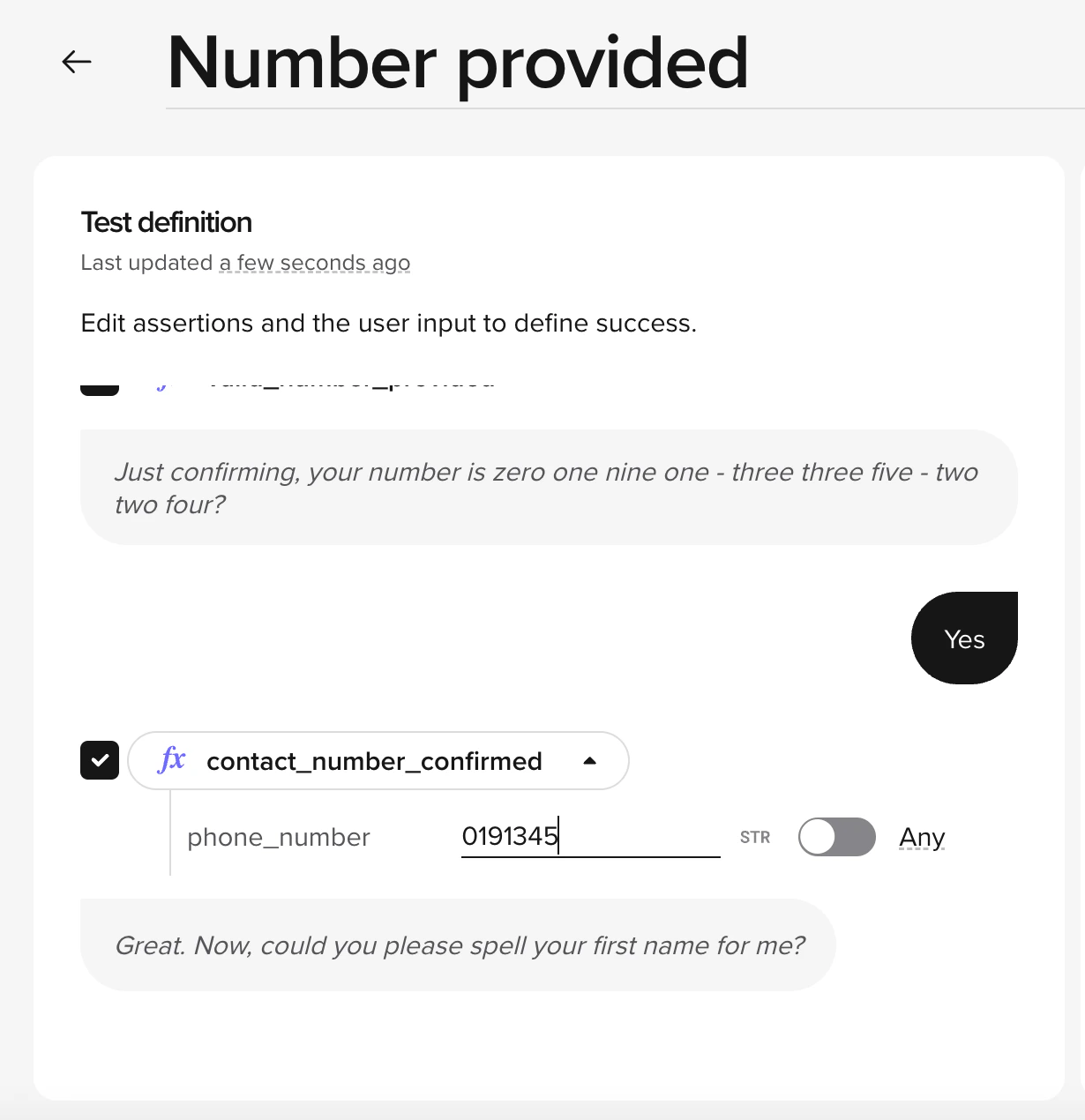

Edit test case parameters

Each test case stores the function call values from the original conversation. You can edit these to test variations of the same scenario without creating a new case.

- Open the test case from Test Cases.

- Select the parameters you want to modify.

- Adjust values to simulate a different scenario – for example, change a date, customer ID, or location.

- Save the case.

Run tests

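You can run a single case or an entire set.

Single case

- Open the case in Test Cases.

- Choose Draft or Sandbox.

- Select Run to execute just this scenario.

Test set

- Open the set in Test Sets.

- Choose Draft or Sandbox.

- Start the run to execute every case in the set.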

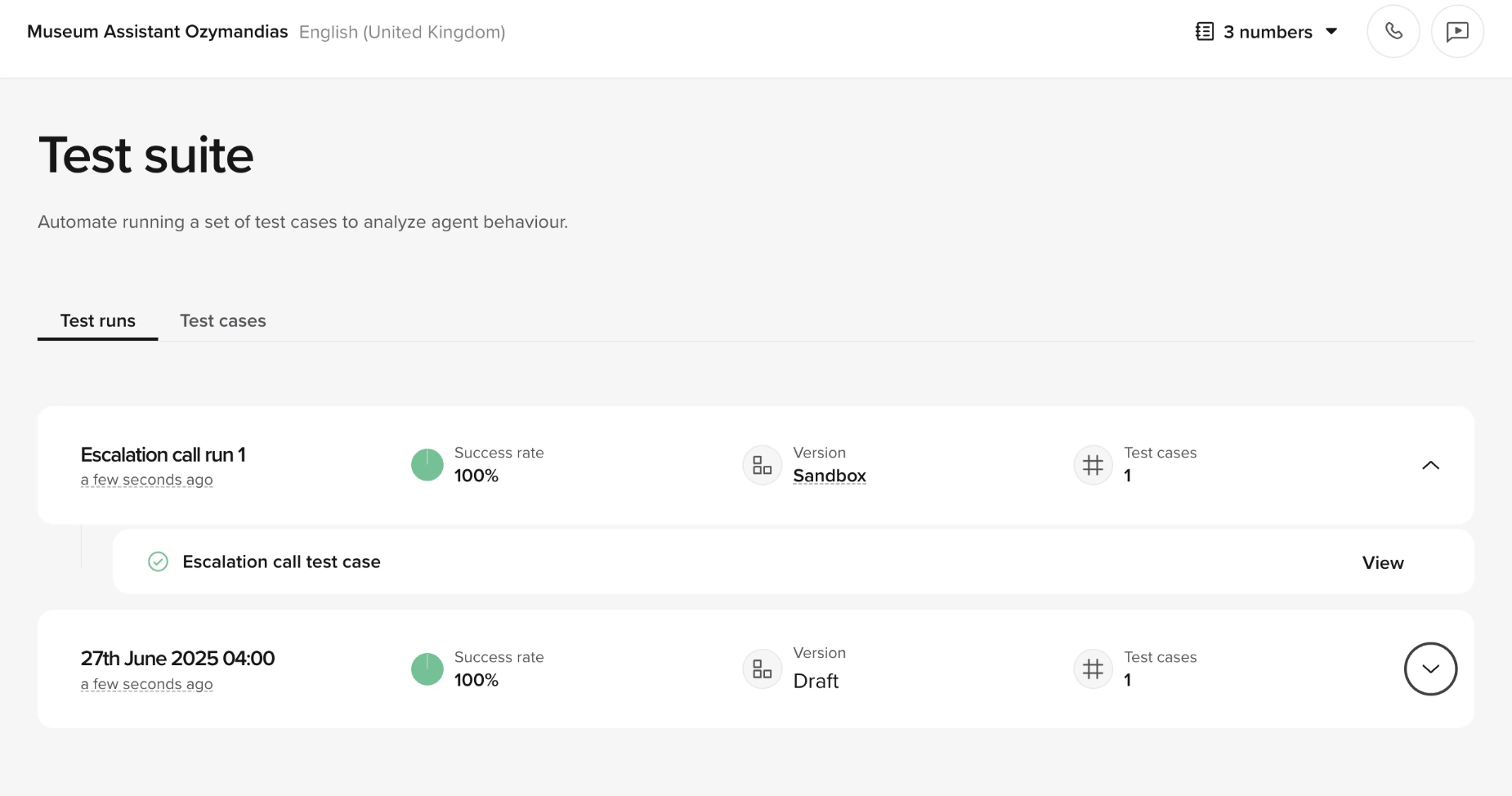

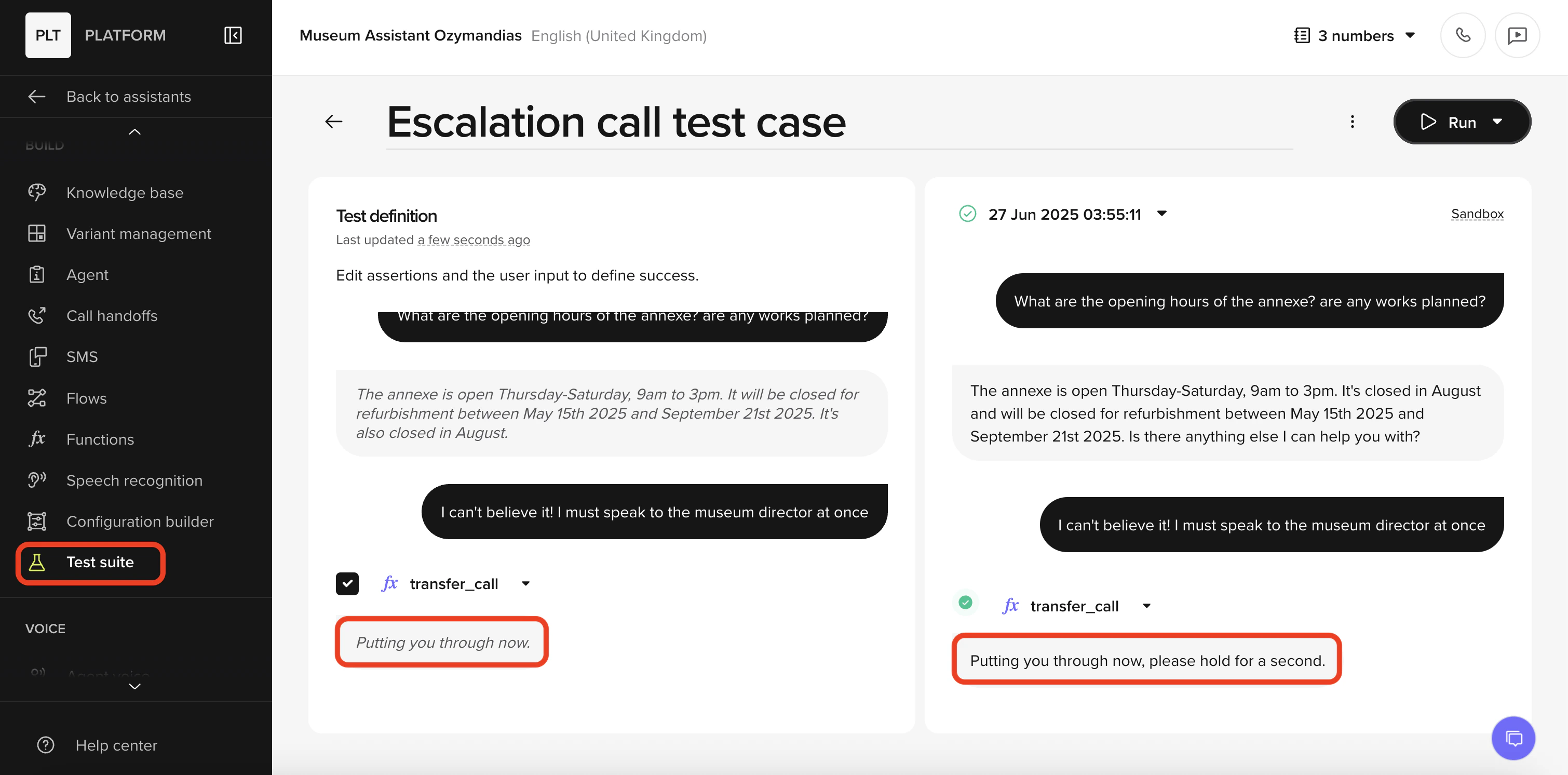

Review results

After a run completes, the results show:

- Outcome – whether each case passed or failed compared to the expected behavior

- Last run – when the test was last executed

- Pass/fail counts – how many cases succeeded vs. failed in the run

- Trend charts – historical pass/fail rates across multiple runs, so you can spot regressions over time

Failing cases often trace back to recent changes such as:

- Knowledge topic changes that altered routing

- Function logic updates that changed return values

- Flow modifications that skipped or reordered steps
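If you keep your own record of outcomes across runs, the pass/fail counts and the regression signal behind the trend charts reduce to a few lines. This is a minimal sketch under an assumed format: each run is a plain dict mapping a case name to `"pass"` or `"fail"`. That format, the function names, and the case names are hypothetical; this is not the test suite's export format or API.

```python
from collections import Counter


def summarize(run: dict[str, str]) -> dict[str, int]:
    """Count passes and fails in one run (case name -> "pass" or "fail")."""
    counts = Counter(run.values())
    return {"passed": counts["pass"], "failed": counts["fail"]}


def pass_rate(run: dict[str, str]) -> float:
    """Fraction of cases that passed; 0.0 for an empty run."""
    return summarize(run)["passed"] / len(run) if run else 0.0


def regressions(previous: dict[str, str], current: dict[str, str]) -> list[str]:
    """Cases that passed in the previous run but fail in the current one."""
    return sorted(
        name
        for name, outcome in current.items()
        if outcome == "fail" and previous.get(name) == "pass"
    )


# Hypothetical history of two runs of the same set, oldest first.
history = [
    {"Caller cancels booking": "pass", "Refund over limit": "pass", "Invalid postcode": "fail"},
    {"Caller cancels booking": "pass", "Refund over limit": "fail", "Invalid postcode": "fail"},
]
print([round(pass_rate(run), 2) for run in history])  # [0.67, 0.33]
print(regressions(history[0], history[1]))            # ['Refund over limit']
```

A case that was already failing ("Invalid postcode") is not reported as a regression; only a pass-to-fail transition is, which is usually the signal worth investigating after a knowledge, function, or flow change.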

Best practices

- Name test cases descriptively – describe the scenario rather than the expected outcome, and avoid generic labels (e.g. “Caller cancels booking” rather than “Test 1”)

- Create focused sets – group cases by feature area (“Refunds”, “Shipping”, “Escalations”) so failures point to the right area

- Cover happy paths and edge cases – include both successful flows and failure scenarios (invalid input, missing data, handoff triggers)

- Re-run after knowledge changes – topic edits can silently break other flows, and re-running your test sets catches this

- Use test cases from real conversations – save cases from Conversation review to test against real-world scenarios

Automate with the Agents API

Test runs are most useful when they gate deployments. The Agents API gives you publish and promote actions you can chain behind a passing test set.

Gate promotions on test results from CI

Pair the test suite with the Agents API to build a safe deployment pipeline. A typical CI job publishes the current draft to Sandbox, runs the relevant test set, and only promotes to Pre-release if the set passes.
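The CI flow described above can be sketched as a single gate function. This is a minimal sketch, not a client for the Agents API: `publish_to_sandbox`, `run_test_set`, `promote_to_prerelease`, and `RunResult` are hypothetical placeholders passed in as callables. You would map them onto the actual Deployments endpoints in your CI job; their real names, parameters, and authentication are not covered here.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class RunResult:
    """Hypothetical summary of one test set run."""
    passed: int
    failed: int


def gate_promotion(
    publish_to_sandbox: Callable[[], str],
    run_test_set: Callable[[str, str], RunResult],
    promote_to_prerelease: Callable[[str], None],
    test_set: str,
) -> bool:
    """Publish the draft to Sandbox, run a set, and promote only if it passes.

    All three callables are hypothetical stand-ins for Agents API calls.
    Returns True if the promotion was made.
    """
    version = publish_to_sandbox()            # hypothetical ID of the published version
    result = run_test_set(test_set, version)  # run the set against Sandbox
    if result.failed > 0 or result.passed == 0:
        # Fail closed: any failure, or an empty run, blocks promotion.
        return False
    promote_to_prerelease(version)
    return True


# Usage with stub functions in place of real API calls.
promoted: list[str] = []
ok = gate_promotion(
    publish_to_sandbox=lambda: "v42",
    run_test_set=lambda name, version: RunResult(passed=12, failed=0),
    promote_to_prerelease=promoted.append,
    test_set="Core intents",
)
print(ok, promoted)  # True ['v42']
```

Passing the API calls in as functions keeps the gate logic testable with stubs. In a real pipeline, exit with a non-zero status when `gate_promotion` returns False so the job stops before anything reaches Pre-release.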

Related pages

Conversation review

Save test cases directly from transcripts.

Environments

Understand the Sandbox and Draft environments used for test runs.

Variant management

Run test sets against specific variants before promoting.

Deployments endpoints

Publish and promote from CI via the Agents API.